Transition Legacy Neural Network Code to dlnetwork Workflows

Legacy neural network functionality (for example, functionality that relates to the network object) will be removed in a future release. Use dlnetwork objects and related functionality instead.

Legacy neural network objects are outdated and are not well suited for modern workflows.

Some of the functionality being removed was introduced before R2006a (for example, network objects and the train function). Using dlnetwork objects (introduced in R2019b) and related features such as the trainnet function (introduced in R2023b) instead is recommended and offers these advantages:

- dlnetwork objects support a wider range of network architectures, which you can train using the trainnet function or import from external platforms.
- dlnetwork objects provide more flexibility and have wider support with Deep Learning Toolbox™ functionality.
- dlnetwork objects provide a unified data type that supports network building, prediction, built-in training, compression, Simulink®, code generation, verification, and custom training loops.

For tabular data workflows, you can train neural networks using the fitrnet (Statistics and Machine Learning Toolbox) and fitcnet (Statistics and Machine Learning Toolbox) functions and convert them to dlnetwork objects.

mdl = fitrnet(X,T); net = dlnetwork(mdl);
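The conversion above can be tried end to end with a small synthetic data set. The following is a minimal sketch, not a definitive recipe: the data, the `LayerSizes=10` setting, and the variable names are assumptions made for illustration, and the final call assumes a release that provides both the `dlnetwork(mdl)` conversion and `minibatchpredict`.

```matlab
% Synthetic tabular regression data (hypothetical, for illustration only).
rng(0)                              % make the example repeatable
X = rand(200,3);                    % 200 observations (rows), 3 predictors
T = X(:,1) + 2*X(:,2) - X(:,3);     % numeric response, 200-by-1

% Train a shallow regression network
% (requires Statistics and Machine Learning Toolbox).
mdl = fitrnet(X,T,LayerSizes=10);

% Convert the trained model to a dlnetwork object.
net = dlnetwork(mdl);

% Make predictions with the converted network.
YPred = minibatchpredict(net,X);
```

After the conversion, `net` can be used with the rest of the dlnetwork functionality listed above, such as compression or code generation.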

For other types of data or additional flexibility, you can train a neural network using the trainingOptions and trainnet functions.

options = trainingOptions("adam"); net = trainnet(X,T,layers,"mse",options);
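As a rough illustration of how a legacy feedforward workflow might map onto trainnet, the sketch below defines the `layers` array explicitly. The data, layer sizes, and training options are assumptions chosen for the example. The commented legacy calls are shown only for comparison: legacy functions expect observations in columns, whereas trainnet with feature data expects observations in rows. The legacy default training algorithm for `feedforwardnet` is Levenberg-Marquardt, so results from the Adam solver used here will generally differ.

```matlab
% Synthetic regression data (hypothetical): 200 observations, 3 features.
rng(0)
X = rand(200,3);                    % observations in rows for trainnet
T = X(:,1) + 2*X(:,2) - X(:,3);     % 200-by-1 targets

% Legacy workflow, for comparison only (observations in columns):
%   legacyNet = feedforwardnet(10);
%   legacyNet = train(legacyNet,X',T');
%   Y = legacyNet(X');

% Comparable architecture: one hidden layer of 10 units with tanh
% activation (the counterpart of tansig) and a linear output.
layers = [
    featureInputLayer(3)
    fullyConnectedLayer(10)
    tanhLayer
    fullyConnectedLayer(1)];

% Training options are illustrative; tune them for your task.
options = trainingOptions("adam", ...
    MaxEpochs=50, ...
    Plots="none", ...
    Verbose=false);

% Train with mean squared error loss; the result is a dlnetwork object.
net = trainnet(X,T,layers,"mse",options);

% Make predictions with the trained network.
YPred = minibatchpredict(net,X);
```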

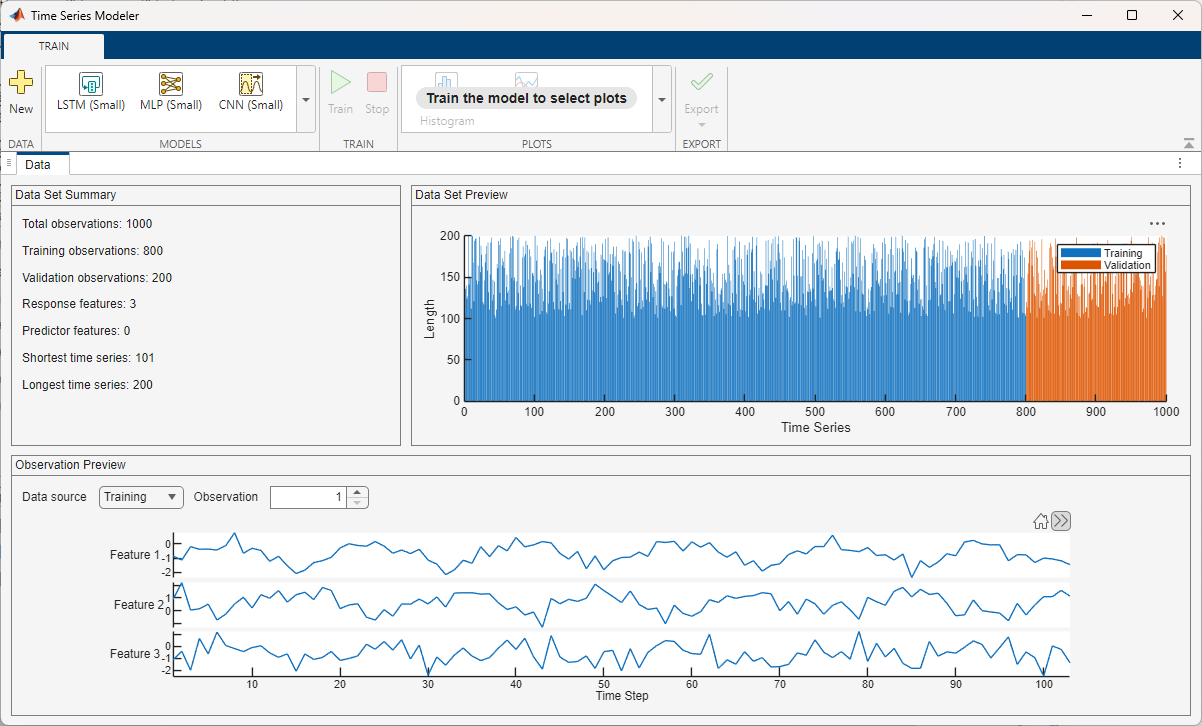

Alternatively, you can recreate your workflow interactively in apps such as Time Series Modeler, Deep Network Designer, Regression Learner (Statistics and Machine Learning Toolbox), and Classification Learner (Statistics and Machine Learning Toolbox), and then export your model.

You can use these apps for deep learning workflows:

- Classification Learner (Statistics and Machine Learning Toolbox)
- Regression Learner (Statistics and Machine Learning Toolbox)

To update your code, the best approach is usually to take code from examples and reference pages and adapt it for your task. You can use these examples to get started:

This table provides recommendations for updating your code. For more specific information, refer to the reference page of the affected functionality.

Note

The training options, model architectures, and algorithms that these examples use are well suited to use or adapt for most tasks. For greater flexibility or to reproduce algorithms exactly, you can implement a custom training loop. For more information about custom training loops, see Custom Training Loops.
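For orientation, a custom training loop of the kind mentioned in the note might look like the following minimal sketch. It assumes synthetic data, full-batch updates, a fixed number of iterations, and the default Adam settings of `adamupdate`; the helper function `modelLoss` and all variable names are hypothetical. A real loop would typically add mini-batching, validation, and a stopping criterion.

```matlab
% Synthetic regression data (hypothetical): 200 observations, 3 features.
rng(0)
X = rand(200,3);
T = X(:,1) + 2*X(:,2) - X(:,3);

% Create an initialized dlnetwork object from a layer array.
net = dlnetwork([
    featureInputLayer(3)
    fullyConnectedLayer(10)
    tanhLayer
    fullyConnectedLayer(1)]);

% Convert the data to formatted dlarray objects.
% "CB" means channel-by-batch, so observations are in columns.
XDl = dlarray(X',"CB");
TDl = dlarray(T',"CB");

% State for the Adam solver.
avgGrad = [];
avgSqGrad = [];

numIterations = 100;
lossHistory = zeros(1,numIterations);

for iteration = 1:numIterations
    % Evaluate the loss and gradients using automatic differentiation.
    [loss,gradients] = dlfeval(@modelLoss,net,XDl,TDl);

    % Update the learnable parameters with one Adam step.
    [net,avgGrad,avgSqGrad] = adamupdate(net,gradients, ...
        avgGrad,avgSqGrad,iteration);

    lossHistory(iteration) = double(extractdata(loss));
end

function [loss,gradients] = modelLoss(net,X,T)
    % Forward pass in training mode, then loss and its gradients
    % with respect to the learnable parameters.
    Y = forward(net,X);
    loss = mse(Y,T);
    gradients = dlgradient(loss,net.Learnables);
end
```

Because the loss and update steps are written out explicitly, this is the place to substitute a different loss function or update rule when you need to reproduce a legacy algorithm more closely.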

| Category | Function or Object to be Removed | Recommendation |
|---|---|---|
| Apps | nnstart | These apps and Live Editor tasks are recommended for deep learning workflows instead: Classification Learner (Statistics and Machine Learning Toolbox) app; Regression Learner (Statistics and Machine Learning Toolbox) app; Cluster Data (Statistics and Machine Learning Toolbox) Live Editor task |
| Time series data workflows | | To learn more about time series data workflows, see these examples and topics: |
| Network building | | To design and customize your own neural network for these workflows, you can create a network using an array of deep learning layers or a |
| Training and prediction | | To learn more about neural network training and prediction, see these examples and topics: |
| Visualization | | To learn more about visualization workflows, see these examples and topics: |
| Data processing | | To learn more about data processing for neural networks, see these examples and topics: |
| Transfer and activation functions | compet, elliotsig, elliot2sig, hardlim, hardlims, logsig, netinv, netprod, netsum, poslin, purelin, radbas, radbasn, satlin, satlins, softmax, tansig | To learn more about using activation and transfer functions in neural networks, see these examples and topics: |
| Metrics and loss functions | | To learn more about using metrics and loss functions when you train neural networks, see these examples and topics: |
| Simulink and code generation | | To learn more about Simulink and code generation for neural networks, see these examples and topics: |
| Mathematical operations | | To learn more about supported mathematical and deep learning operations and layers, see these examples and topics: |
| Autoencoders | | To learn more about autoencoder workflows, see these examples and topics: |
| Self-organizing maps | | To learn more about self-organizing map workflows, see these examples and topics: |
| Pruning | | To learn more about pruning and other neural network compression workflows, see these examples and topics: |
| Weights and bias functions | | To learn more about initializing and customizing neural network learnable parameters, see these examples and topics: |
| Training and learning algorithms | | To learn more about customizing training algorithms, see these examples and topics: |
| Derivative functions | | To learn more about automatic differentiation workflows, see these examples and topics: |

See Also

fitrnet (Statistics and Machine Learning Toolbox) | fitcnet (Statistics and Machine Learning Toolbox) | trainnet | trainingOptions | dlnetwork | Time Series Modeler | Deep Network Designer | Classification Learner (Statistics and Machine Learning Toolbox) | Regression Learner (Statistics and Machine Learning Toolbox)