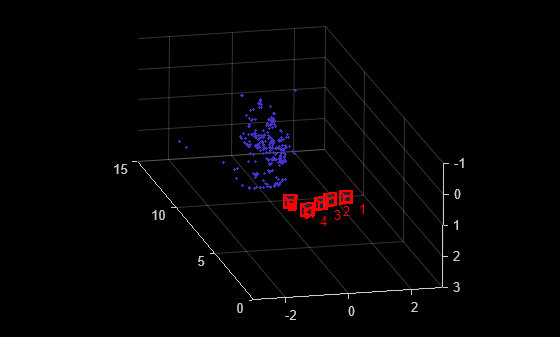

triangulateMultiview

3-D locations of world points matched across multiple images

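Syntax

worldPoints = triangulateMultiview(pointTracks,cameraPoses,intrinsics)

[worldPoints,reprojectionErrors] = triangulateMultiview(___)

[worldPoints,reprojectionErrors,validIndex] = triangulateMultiview(___)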

Description

worldPoints = triangulateMultiview(pointTracks,cameraPoses,intrinsics) returns the 3-D locations of world points matched across multiple images. pointTracks specifies an array of matched points. cameraPoses and intrinsics specify the camera pose information and the camera intrinsics, respectively. The function does not account for lens distortion.
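To illustrate the geometry behind this call, the following is a minimal sketch of linear least-squares triangulation of a single point track, written in plain Python rather than MATLAB. It assumes a skew-free pinhole model x ~ K (R X + t), where R and t map world coordinates to camera coordinates; this convention may differ from how the cameraPoses table stores poses. The helper names (camera_matrix, solve3, triangulate_track) are hypothetical, and the method shown is a textbook linear formulation, not necessarily the algorithm triangulateMultiview uses internally.

```python
def camera_matrix(K, R, t):
    """Return the 3x4 projection matrix P = K [R | t] (world-to-camera R, t)."""
    Rt = [R[i] + [t[i]] for i in range(3)]
    return [[sum(K[i][k] * Rt[k][j] for k in range(3)) for j in range(4)]
            for i in range(3)]

def solve3(A, b):
    """Solve a 3x3 linear system by Gaussian elimination with partial pivoting."""
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for c in range(3):
        p = max(range(c, 3), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(c + 1, 3):
            f = M[r][c] / M[c][c]
            for k in range(c, 4):
                M[r][k] -= f * M[c][k]
    x = [0.0] * 3
    for r in (2, 1, 0):
        x[r] = (M[r][3] - sum(M[r][k] * x[k] for k in range(r + 1, 3))) / M[r][r]
    return x

def triangulate_track(points, matrices):
    """Triangulate one track: points are (u, v) pixels, matrices the matching 3x4 P's."""
    rows, rhs = [], []
    for (u, v), P in zip(points, matrices):
        for coord, Pi in ((u, P[0]), (v, P[1])):
            # coord * (P[2] . Xh) - (Pi . Xh) = 0 with Xh = (X, Y, Z, 1)
            rows.append([coord * P[2][j] - Pi[j] for j in range(3)])
            rhs.append(-(coord * P[2][3] - Pi[3]))
    # Least-squares solution via the normal equations (A^T A) X = A^T b
    AtA = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    Atb = [sum(r[i] * b for r, b in zip(rows, rhs)) for i in range(3)]
    return solve3(AtA, Atb)

K = [[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]]
R_id = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
P1 = camera_matrix(K, R_id, [0.0, 0.0, 0.0])
P2 = camera_matrix(K, R_id, [-1.0, 0.0, 0.0])  # camera centre at world X = 1
# Noise-free observations of the world point (0.5, 0.2, 5.0) in both views
X = triangulate_track([(400.0, 272.0), (240.0, 272.0)], [P1, P2])
print(X)
```

Each view contributes two linear equations, so two views already overdetermine the three unknowns; with noisy observations the normal equations give the least-squares compromise. As the description notes, the observations should be free of lens distortion before they reach this step.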

[worldPoints,reprojectionErrors] = triangulateMultiview(___) additionally returns the mean reprojection error for each 3-D world point, using any combination of input arguments from the previous syntax.
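The mean reprojection error of a world point can be sketched as follows: project the point into every view that observed it and average the pixel distances to the observed locations. This plain-Python version assumes the same skew-free pinhole model x ~ K (R X + t) with world-to-camera R and t; the function names are hypothetical, and the sketch is not the toolbox implementation.

```python
import math

def project(K, R, t, X):
    """Project world point X to pixels with x ~ K (R X + t); K assumed skew-free."""
    Xc = [sum(R[i][j] * X[j] for j in range(3)) + t[i] for i in range(3)]
    u = K[0][0] * Xc[0] / Xc[2] + K[0][2]
    v = K[1][1] * Xc[1] / Xc[2] + K[1][2]
    return u, v

def mean_reprojection_error(X, observations, poses, K):
    """Average pixel distance between each observation and the projection of X."""
    errors = []
    for (u_obs, v_obs), (R, t) in zip(observations, poses):
        u, v = project(K, R, t, X)
        errors.append(math.hypot(u - u_obs, v - v_obs))
    return sum(errors) / len(errors)

K = [[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]]
R = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
poses = [(R, [0.0, 0.0, 0.0]), (R, [-1.0, 0.0, 0.0])]
# Exact projections are (400, 272) and (240, 272); observations are off by 3 and 4 px
err = mean_reprojection_error([0.5, 0.2, 5.0], [(403.0, 272.0), (240.0, 276.0)], poses, K)
print(err)  # 3.5
```

A large mean error for a point usually indicates a bad match in its track, which makes this output a convenient filter before further refinement.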

[worldPoints,reprojectionErrors,validIndex] = triangulateMultiview(___) additionally returns the indices of valid and invalid world points. Valid points are located in front of the cameras.
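"In front of the cameras" is commonly tested with a positive-depth (cheirality) check: transform the point into each camera frame and require a positive Z coordinate. The sketch below assumes world-to-camera poses (R, t) and that the poses passed in are those of the views observing the point; the helper names are hypothetical, and the exact test triangulateMultiview performs may differ.

```python
def in_front_of_all_cameras(X, poses):
    """True if X has positive depth (Z > 0) in every camera frame Xc = R X + t."""
    for R, t in poses:
        depth = sum(R[2][j] * X[j] for j in range(3)) + t[2]
        if depth <= 0:
            return False
    return True

def valid_mask(world_points, poses):
    """One boolean per world point: True where the point passes the depth check."""
    return [in_front_of_all_cameras(X, poses) for X in world_points]

R = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
poses = [(R, [0.0, 0.0, 0.0]), (R, [-1.0, 0.0, 0.0])]
mask = valid_mask([[0.5, 0.2, 5.0], [0.0, 0.0, -2.0]], poses)
print(mask)  # [True, False]
```

Linear triangulation can return a point behind the cameras when matches are wrong or the baseline is tiny, so such points are typically discarded rather than refined.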

Tips

Before detecting the points, correct the images for lens distortion by using the undistortImage function. Alternatively, you can undistort the detected points directly by using the undistortPoints function.
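Because triangulation ignores lens distortion, distorted observations bias the result. The sketch below shows one common way to remove radial distortion from a single pixel: invert a two-coefficient radial model in normalized coordinates by fixed-point iteration. The model (k1, k2 only, no tangential terms), the coefficient values, and the function name are assumptions for illustration; this is not how undistortPoints is implemented.

```python
def undistort_point(u, v, K, k1, k2, iterations=20):
    """Remove radial distortion x_d = x (1 + k1 r^2 + k2 r^4) from one pixel."""
    fx, fy, cx, cy = K[0][0], K[1][1], K[0][2], K[1][2]
    # Normalized distorted coordinates
    xd, yd = (u - cx) / fx, (v - cy) / fy
    x, y = xd, yd  # initial guess: no distortion
    for _ in range(iterations):
        r2 = x * x + y * y
        scale = 1 + k1 * r2 + k2 * r2 * r2
        x, y = xd / scale, yd / scale
    # Back to pixel coordinates
    return x * fx + cx, y * fy + cy

K = [[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]]
k1, k2 = -0.2, 0.05  # hypothetical distortion coefficients
# Distort the ideal pixel (400, 272) with the forward model...
x, y = (400.0 - 320.0) / 800.0, (272.0 - 240.0) / 800.0
r2 = x * x + y * y
s = 1 + k1 * r2 + k2 * r2 * r2
ud, vd = x * s * 800.0 + 320.0, y * s * 800.0 + 240.0
# ...then recover it
u, v = undistort_point(ud, vd, K, k1, k2)
print(u, v)
```

Fixed-point iteration converges quickly when distortion is mild; for strong distortion or points far from the image centre, a Newton-style solver is a more robust choice.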

Version History

Introduced in R2016a

See Also

Functions

undistortImage | estimateCameraParameters | bundleAdjustment | bundleAdjustmentMotion | bundleAdjustmentStructure | undistortPoints | estrelpose