Object Detector Analyzer

Interactively visualize and evaluate object detection results against ground truth

Since R2026a

Description

The Object Detector Analyzer app enables you to visualize and evaluate object detection results against ground truth data. Using the app, you can:

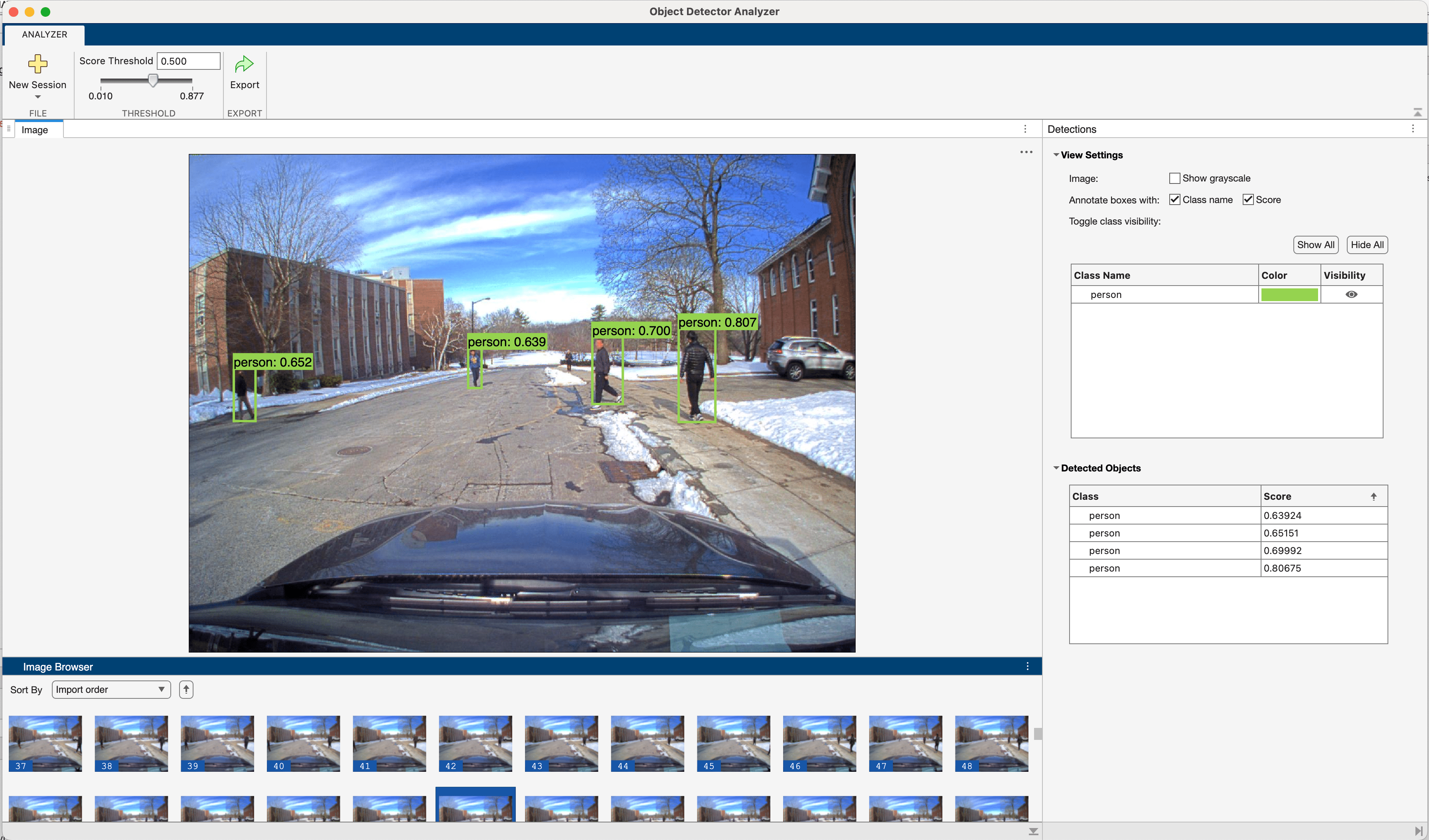

Evaluate object detection performance either without ground truth data or by comparing detections to ground truth and generating performance metrics. To get started, see Get Started with Object Detector Analyzer App.

Run a pretrained object detector in the app, or import precomputed object detection results from the workspace. For a list of supported object detectors to run in the app, see Run Supported Object Detectors. For information about the format of precomputed detection results, see Import Precomputed Detection Results.

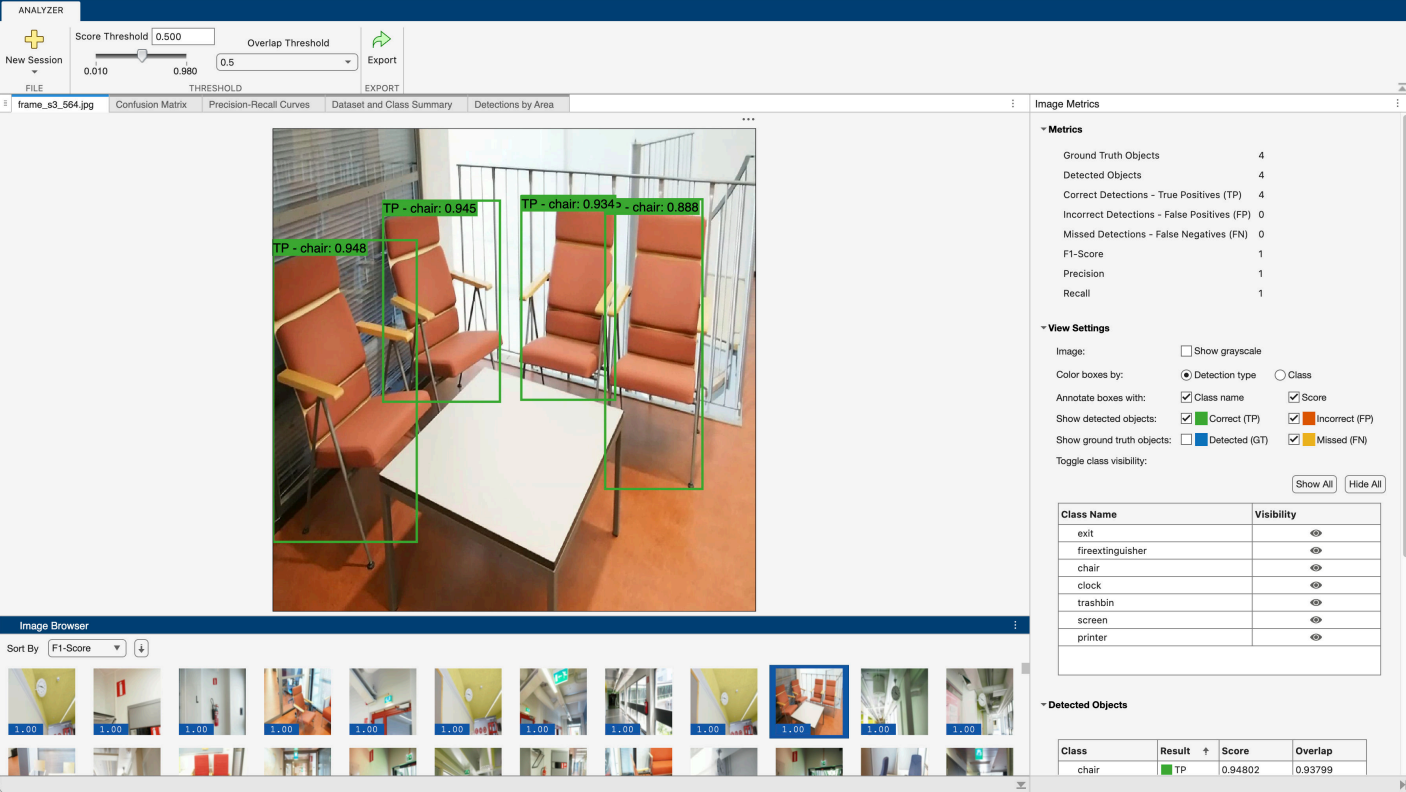

Visualize and compare detections and ground truth annotations in an interactive image browser. Inspect individual detections, and overlay confidence scores, false positives, and false negatives on images.

Compute and visualize detector performance metrics, including precision-recall curves, confusion matrices, average precision (AP), mean average precision (mAP), and mean log-average miss rate (mLAMR), across data sets, classes, and overlap thresholds. You can also analyze detection performance by object area. For more information on performance metrics, see Evaluate Object Detector Performance.

Interactively adjust the detection threshold and overlap (IoU) threshold to analyze how stricter or more lenient thresholds impact detector performance, enabling you to tune your detector for the optimal trade-off between false negatives and false positives.

Export all detections or filtered results to the workspace for further analysis.

Export computed performance metrics as an objectDetectionMetrics object. You can use this object to create custom visualizations, compare different detector models, or perform further performance analysis.

To learn more about this app, see Get Started with Object Detector Analyzer App.
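
To build intuition for how the detection threshold and the overlap (IoU) threshold interact, the following is a minimal sketch that counts true positives, false positives, and false negatives for a single image at the command line. The boxes, scores, variable names, and the greedy matching rule are assumptions made for illustration only; this is not the matching procedure the app itself uses. The sketch relies on the bboxOverlapRatio function from Computer Vision Toolbox, which returns the intersection-over-union ratio by default.

```matlab
% Hypothetical data for one image. Boxes are [x y width height].
gtBoxes  = [10 10 50 50; 100 100 40 40];                  % ground truth
detBoxes = [12 12 50 50; 105 105 40 40; 200 200 30 30];   % detections
scores   = [0.9; 0.6; 0.3];                               % detection scores

scoreThreshold   = 0.5;   % discard detections scoring below this value
overlapThreshold = 0.5;   % minimum IoU for a detection to match ground truth

% Keep only confident detections, ordered from highest to lowest score.
keep = scores >= scoreThreshold;
kept = detBoxes(keep, :);
[~, order] = sort(scores(keep), "descend");
kept = kept(order, :);

% IoU between each kept detection (rows) and each ground truth box (columns).
iou = bboxOverlapRatio(kept, gtBoxes);

% Greedy matching (an illustrative assumption): each detection claims the
% unmatched ground truth box it overlaps most, if the IoU is high enough.
gtMatched = false(1, size(gtBoxes, 1));
tp = 0;
for i = 1:size(kept, 1)
    candidates = iou(i, :);
    candidates(gtMatched) = 0;          % a ground truth box matches only once
    [bestIoU, j] = max(candidates);
    if bestIoU >= overlapThreshold
        gtMatched(j) = true;
        tp = tp + 1;
    end
end

fp = size(kept, 1) - tp;      % kept detections with no match
fn = size(gtBoxes, 1) - tp;   % ground truth boxes left undetected

precision = tp / (tp + fp);
recall    = tp / (tp + fn);
```

In this sketch, raising overlapThreshold to 0.7 leaves the second detection unmatched, because its IoU with the nearest ground truth box is roughly 0.62. That detection then counts as a false positive and the corresponding ground truth box as a false negative. Lowering scoreThreshold below 0.3 admits the third detection, which overlaps no ground truth box and therefore adds a false positive.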

Open the Object Detector Analyzer App

MATLAB® Toolstrip: On the Apps tab, under Image Processing and Computer Vision, click the app icon.

MATLAB command prompt: Enter objectDetectorAnalyzer.

Version History

Introduced in R2026a