estimateNetworkOutputBounds

Syntax

Description

Add-On Required: This feature requires the AI Verification Library for Deep Learning Toolbox add-on.

dlnetwork bounds

[YLower,YUpper] = estimateNetworkOutputBounds(net,XLower,XUpper) computes lower and upper output bounds, YLower and YUpper, respectively, for the network net for input within the bounds specified by XLower and XUpper.

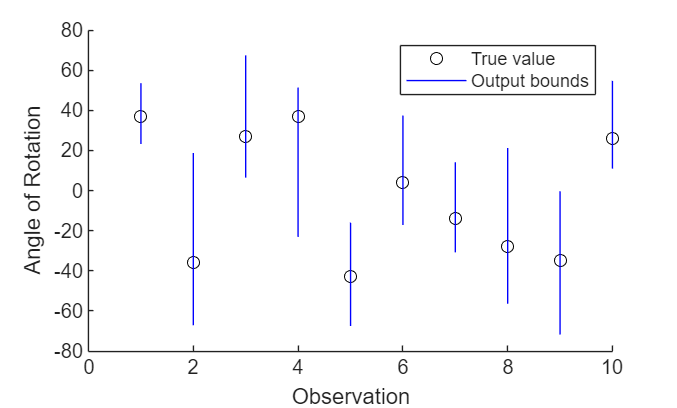

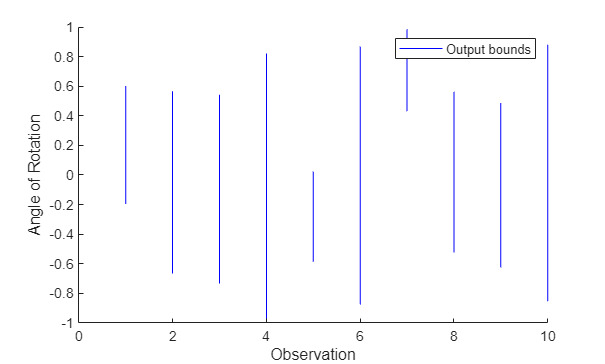
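As a rough illustration of the first syntax, the sketch below builds a small untrained network and bounds its output around a nominal input. The layer sizes, the input values, the variable name perturbation, and the "CB" data format are all assumptions made for this example, and the call requires the add-on noted above.

```matlab
% Hypothetical small network, used only to show the shape of the call.
layers = [
    featureInputLayer(2)
    fullyConnectedLayer(4)
    reluLayer
    fullyConnectedLayer(3)];
net = dlnetwork(layers);   % initializes the learnable parameters

% Nominal input: 2 features, 1 observation ("CB" = channel, batch).
X = dlarray([0.5; -0.2],"CB");

% Assumed perturbation size; the input bounds form a box around X.
perturbation = 0.01;
XLower = X - perturbation;
XUpper = X + perturbation;

% Bounds on the network output for any input inside the box.
[YLower,YUpper] = estimateNetworkOutputBounds(net,XLower,XUpper);
```

Each element of YLower should be no greater than the matching element of YUpper; a narrow gap suggests the output is relatively insensitive to perturbations of this size.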

The function uses abstract interpretation to compute the range of output values that the network returns when the input is between the specified lower and upper bounds. Use this function to determine the sensitivity of the network predictions to input perturbations. Abstract interpretation can introduce overapproximations, so the returned bounds are guaranteed to contain the true output range but might not be tight.
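To give a feel for how bounds propagate through a network, here is a minimal sketch that uses plain interval arithmetic, the simplest form of abstract interpretation. It is not the algorithm that estimateNetworkOutputBounds uses internally; the weights, biases, and input box are made-up values, and no add-on is needed to run it.

```matlab
% Toy network y = W2*relu(W1*x + b1) + b2 with made-up parameters.
W1 = [1 -1; 2 0.5];  b1 = [1; 0.5];
W2 = [1 -1];         b2 = 0.5;

% Input box: xL <= x <= xU, elementwise.
xL = [-0.1; 0.9];
xU = [ 0.1; 1.1];

% Affine layer: positive weights carry lower bounds to lower bounds,
% negative weights swap the roles of the lower and upper bounds.
zL = max(W1,0)*xL + min(W1,0)*xU + b1;
zU = max(W1,0)*xU + min(W1,0)*xL + b1;

% ReLU is monotone, so it can be applied to each bound directly.
aL = max(zL,0);
aU = max(zU,0);

% Second affine layer, same rule as the first.
yL = max(W2,0)*aL + min(W2,0)*aU + b2;
yU = max(W2,0)*aU + min(W2,0)*aL + b2;

% Sanity check: outputs for random inputs in the box stay inside [yL,yU].
rng(0);
Xs = xL + (xU - xL).*rand(2,1000);
Ys = W2*max(W1*Xs + b1,0) + b2;
assert(all(Ys >= yL - 1e-12 & Ys <= yU + 1e-12))
```

The interval [yL,yU] always contains the true output range but is usually wider than it, because each layer is bounded independently and correlations between neurons are lost. That is the kind of overapproximation described above.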

[YLower,YUpper] = estimateNetworkOutputBounds(___,Name=Value) computes lower and upper output bounds with additional options specified by one or more name-value arguments.

ONNX and PyTorch network bounds

This feature requires the Deep Learning Toolbox Interface for alpha-beta-CROWN Verifier add-on.

[YLower,YUpper] = estimateNetworkOutputBounds(___,Name=Value) computes lower and upper output bounds with additional options specified by one or more name-value arguments.

Examples

Input Arguments

Name-Value Arguments

Output Arguments

Algorithms

References

[1] Goodfellow, Ian J., Jonathon Shlens, and Christian Szegedy. “Explaining and Harnessing Adversarial Examples.” Preprint, submitted March 20, 2015. https://arxiv.org/abs/1412.6572.

[2] Singh, Gagandeep, Timon Gehr, Markus Püschel, and Martin Vechev. “An Abstract Domain for Certifying Neural Networks.” Proceedings of the ACM on Programming Languages 3, no. POPL (January 2, 2019): 1–30. https://doi.org/10.1145/3290354.

[3] Singh, Gagandeep, Timon Gehr, Markus Püschel, and Martin Vechev. “An Abstract Domain for Certifying Neural Networks.” Proceedings of the ACM on Programming Languages 3, no. POPL (January 2, 2019): 1–30. https://doi.org/10.1145/3290354.

[4] Zhang, Huan, Tsui-Wei Weng, Pin-Yu Chen, Cho-Jui Hsieh, and Luca Daniel. “Efficient Neural Network Robustness Certification with General Activation Functions.” arXiv, 2018. https://doi.org/10.48550/ARXIV.1811.00866.

[5] Xu, Kaidi, Zhouxing Shi, Huan Zhang, Yihan Wang, Kai-Wei Chang, Minlie Huang, Bhavya Kailkhura, Xue Lin, and Cho-Jui Hsieh. “Automatic Perturbation Analysis for Scalable Certified Robustness and Beyond.” arXiv, 2020. https://doi.org/10.48550/ARXIV.2002.12920.

[6] Xu, Kaidi, Huan Zhang, Shiqi Wang, Yihan Wang, Suman Jana, Xue Lin, and Cho-Jui Hsieh. “Fast and Complete: Enabling Complete Neural Network Verification with Rapid and Massively Parallel Incomplete Verifiers.” arXiv, 2020. https://doi.org/10.48550/ARXIV.2011.13824.