Deep Learning for Radar and Wireless Communications

Overview

Modulation identification and target classification are important functions for intelligent RF receivers. These functions have numerous applications in cognitive radar, software-defined radio, and efficient spectrum management. To identify both communications and radar waveforms, it is necessary to classify them by modulation type. For this, you can extract meaningful features which can be input to a classifier. While effective, this procedure can require effort and domain knowledge to yield an accurate identification. A similar challenge exists for target classification.

In this webinar, we will demonstrate data synthesis techniques that can be used to train Deep Learning and Machine Learning networks for a range of radar and wireless communications systems including:

- Understanding data set trade-offs between machine learning and deep learning workflows

- Efficient ways to work with 1D and 2D (time-frequency) signals

- Feature extraction techniques that can be used to improve classification results

- Application examples with Radar RCS identification, radar/comms waveform modulation ID, and Micro-Doppler signatures to help with target identification (for example, pedestrians, bicycles, aircraft with rotating blades)

- Validation with over-the-air signals from software-defined radios (SDR) and radars.

About the Presenter

Rick Gentile focuses on Phased Array, Signal Processing, and Sensor Fusion applications at MathWorks. Prior to joining MathWorks, Rick was a Systems Engineer at MITRE and MIT Lincoln Laboratory, where he worked on the development of radar systems. Rick also was a DSP Applications Engineer at Analog Devices where he led embedded processor and system level architecture definitions for high performance signal processing systems, including automotive driver assist systems. Rick co-authored the text “Embedded Media Processing”. He received a B.S. in Electrical and Computer Engineering from the University of Massachusetts, Amherst and an M.S. in Electrical and Computer Engineering from Northeastern University, where his focus areas of study included Microwave Engineering, Communications and Signal Processing.

Recorded: 21 May 2020

Hello, my name is Rick Gentile. I'm the product manager for Radar and Sensor Fusion tools at MathWorks. Welcome to this webinar on deep learning for radar and wireless communications. After a quick introduction, I'll briefly discuss the tools related to deep learning you can use with MATLAB.

I'll then discuss ways you can interface to software-defined radios and radars. We can then look at how you can pre-process and label this type of data. And, finally, I'll go through four examples that are based on a combination of data synthesis and data we collected, across a range of classification-based applications. So let's get started.

There are many tools and algorithms from MathWorks that you can use for radar system design. You can design, model, simulate, and test everything from the antenna and RF components to the signal and data processing systems. This includes things like tracking and sensor fusion as well.

You can also model the environment that radars operate in, including terrain, clutter, targets, and interference. In this webinar, I'll focus on using these capabilities to train deep learning networks. Similar to what I describe for radar, you can design, model, simulate, and test wireless systems, including 5G systems. In this session, I'll focus on waveforms, channel models, and impairments to generate data to train deep learning networks as well.

Now, one thing to keep in mind: while most discussions about deep learning focus on developing predictive models, the rest of the end-to-end workflow, including data synthesis and augmentation, labeling, pre-processing, and deployment to embedded hardware devices or to the cloud, is equally important. Now, keeping this workflow as a reference, let's quickly go through the key challenges.

It's probably clear that you won't get a good model without good data to train it. And that's even more true for signal and time series data. Some work may be needed in these initial steps of the workflow. Now, to train a deep neural network, a large, high-quality data set is required. Not only that, but supervised learning approaches require labeled data sets. Labeling, though repetitive and time consuming, is an essential part of the workflow.

We'll also see more closely how radar and wireless specific expertise and tools play a key role when preparing and pre-processing data to train networks, especially since you won't find quite as much published research in these application areas as you would find in applications based in computer vision, for example.

Now, once you have your model ready and you want to deploy it, it's often a challenge to write C, C++, and CUDA code for targeting embedded platforms, or to deploy to the cloud. In this talk, we'll walk you through the various parts of the workflow, and at the end we'll review how we've addressed the pain points. We'll also use our Deep Learning Toolbox to access networks, but as you'll see, all the pre-processing, labeling, and data synthesis work is network agnostic. And this will work with networks outside MATLAB as well.

Now, as with any engineering challenge, there are multiple trade offs to consider. First, where did you get the data? How do you collect, manage, and label data you bring in from radios and radars? This can be a challenge when you have large amounts of data. It can also be tricky to create the conditions in the field that stress a classifier.

Now, data synthesis can be a great option to train a network if the fidelity can match what the real system will see. We'll look at several examples later where we train with synthesized data and test with data captured from hardware. You'll also need to identify which of the learning techniques is best for your application.

On one end of the spectrum, you may feed radar or comms IQ data into the network for deep learning. On the other end, you may extract features that leverage your knowledge of the domain. Now, you may also find that an approach somewhere in between these two extremes is best. For example, time-frequency maps, or some of the pre-processing techniques that I'll show you, may provide a better starting point for the network. Also, automated feature extractors, like the wavelet scattering algorithm that I'll show, can reduce the amount of data needed for training and, again, may provide a better starting point for the network.

We'll look at all of these techniques in this webinar, but the bottom line is that the trade-offs you have to consider must be balanced between the data set size, your domain knowledge, and the compute resources required to realize your solution in a real system. Deep learning falls into the realm of artificial intelligence, and it is a type of machine learning in which models learn from vast amounts of data. Deep learning is usually implemented using a neural network architecture.

A neural network consists of many layers. We start with an input layer. In radar and wireless applications, this could either be the IQ data, or time frequency maps, or features you extract from the signals. There's also a series of hidden layers that extract features and understand the underlying patterns in the data. And finally there's the output layer, which can perform classification or regression.

Now, there are usually three main steps to develop a deep learning model. You design your network, you train the designed network, and you optimize it. Now, for designing the network, you can either design your own network from scratch programmatically, or you can use our Deep Network Designer app. You can also import some reference models, and you can use already trained architectures for your specific deep learning task. Or, if you or your colleagues work with different deep learning frameworks, you can collaborate in the AI ecosystem through the importers and exporters we provide.

Now, from a training standpoint, you can train your designed network, and you can also scale your computation to the available resources. For example, this could include physical GPUs available to you that you have on your own site, you can also scale up your computation to GPUs on cloud platforms.

And finally, for the optimization: once you train your network, there might be some tuning needed to optimize network performance in terms of its accuracy, and manually selecting hyperparameter values is tedious. But MATLAB provides you with a tool that does the automatic hyperparameter selection for you.

And notice the two-way arrow in the middle here. This signifies that you might have to go back and forth between the three steps in order to get the best model. For example, once you design your network and train it, you might not be able to get the expected accuracy. At that point, you can either change the design or try hyperparameter optimization to get the best results.

And, I should mention we also have a tips and tricks documentation page as part of our deep learning toolbox that can help you improve your performance. In fact, we used it extensively to develop the examples I'll go through later. And, I'll also provide the link at the end of the webinar to this great resource for you.

Next, I want to focus on the training step. For each of the examples I go through later in the webinar, training was done on a large set of data. In some cases, the training was done with synthesized data. In others, it was done with data collected via hardware.

This training process can be greatly accelerated with the use of a GPU. We can also get an indication of the speedup in the results window, along with the corresponding validation accuracy. Now, this is just a generic example, but you get a sense of how the accuracy is represented. You can also see the elapsed time for the training, and you can also see here the hardware resource that helped accelerate the training process.

This is important because we're going to have a lot of data, and we want to reduce this time as we're training our network. Now, the performance of the network improves over numerous training iterations as its weights are optimized to match the input-output associations in the training data.

Notice there are two important and different lines here. In blue, you see the accuracy on the training data. That's the portion of your data set actually used to train the network, that is, to optimize the values of its weights. The dashed black line is the accuracy on the validation data. And this is sometimes referred to as the dev set.

It's the data the network hasn't used to train, so it's useful to check how well the model is able to generalize its behavior to data it hasn't seen before. It includes data that's as representative as possible of the actual problem you're trying to solve. Now, you may not have enough of this type of data to use it effectively to train your network, but your decision on when you've reached a good enough accuracy will be based on this metric.

You obviously always expect to do worse on validation than you do on your training data. Now, when you do everything well, then you can hope to have these two as close as possible together.
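The split between training and validation data described above can be sketched in a few lines. This is a minimal Python/NumPy illustration, not the MATLAB workflow used in the webinar; the function names, the 80/20 split, and the fixed seed are all assumptions made for the example.

```python
import numpy as np

def split_train_validation(num_samples, validation_fraction=0.2, seed=0):
    """Shuffle sample indices and split them into training and validation sets."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(num_samples)
    num_val = int(round(num_samples * validation_fraction))
    # The first num_val shuffled indices are held out; the rest are used for training.
    return order[num_val:], order[:num_val]

def accuracy(predicted, truth):
    """Fraction of predictions that match the ground-truth labels."""
    return float(np.mean(np.asarray(predicted) == np.asarray(truth)))

train_idx, val_idx = split_train_validation(1000)
```

Computing `accuracy` separately on the samples indexed by `train_idx` and `val_idx` gives the two curves discussed above; a large gap between them suggests the model is not generalizing.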

OK, so, so far we've talked about the different kinds of things you can do when you model radars and communications systems. We've talked about the MATLAB deep learning workflow, and how you can use things like the deep learning toolbox, but you can also use networks outside MATLAB.

I want to now focus on hardware connectivity, and I'll start with software-defined radios. We have a range of add-ons, and this is a snapshot from our Add-On Explorer, which you can bring up in MATLAB. It allows you to install hardware support packages for some common off-the-shelf radios. And, when you install these with our Communications Toolbox, you get connectivity to these radios and can configure them. You can take data off the radios and process it. There are a lot of great examples that go with these.

I'm going to show you, specifically, the Pluto radio, but a lot of what I show you can be done with any of these radios. We also have an add-on package for a platform called the Demorad, which Analog Devices puts out. This platform is a small 24 GHz phased array radar. And here it is. This is me in our atrium, and as I move, notice that you can see the range change as I'm moving, and also the angle. When my colleague walks across the field of view, you can see his detections in the same way. So these are pretty low-cost ways of connecting radars and radios to MATLAB directly.

And you can collect your own data, and feed it into deep learning or machine learning networks for classification. I'm going to show you how some of these things can be used in the examples as we go further into the discussion. Now imagine taking data off of those hardware platforms.

What do you do with that data? Well, the first thing we want to look at is how we can analyze the signals that come off them, and if we're going to feed them into a deep learning network, or even a machine learning network, we're going to want to do labeling.

So let's start off with the signal analysis piece. And to motivate the examples, I want to generate three waveforms. For this, I'm going to use our Radar Waveform Analyzer app, which is part of Phased Array System Toolbox, to generate a rectangular waveform, a linear FM waveform, and a phase-coded waveform with Barker coding.

OK, so I'm just going through here and setting up these waveforms. I'll build up the three, and I'm going to use these in the next set of examples. So, once I've added them, you can see rectangular, linear FM, and phase-coded. Those are my waveforms. I can configure the different parameters that go with them. I can generate plots for each of them. You can see the different ways to plot: ambiguity plots, as well as spectrograms and spectra.
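For readers who want to see what these three waveform types look like as sample sequences, here is a minimal Python/NumPy sketch. It is not what the Radar Waveform Analyzer app produces; the sample rate, pulse width, sweep bandwidth, choice of the 13-chip Barker code, and samples per chip are all assumed values chosen for illustration.

```python
import numpy as np

fs = 1e6                 # sample rate in Hz (assumed)
pulse_width = 50e-6      # pulse duration in seconds (assumed)
n = int(round(fs * pulse_width))
t = np.arange(n) / fs

# Rectangular pulse: constant complex envelope for the pulse duration.
rect = np.ones(n, dtype=complex)

# Linear FM pulse: instantaneous frequency sweeps from -B/2 to +B/2.
bandwidth = 200e3        # sweep bandwidth in Hz (assumed)
chirp_rate = bandwidth / pulse_width
lfm = np.exp(1j * np.pi * chirp_rate * (t - pulse_width / 2) ** 2)

# Phase-coded pulse: 13-chip Barker code, each chip held for several samples.
barker13 = np.array([1, 1, 1, 1, 1, -1, -1, 1, 1, -1, 1, -1, 1])
samples_per_chip = 4     # assumed
barker = np.repeat(barker13, samples_per_chip).astype(complex)
```

All three have a constant-magnitude envelope, which is why they are hard to tell apart in a time-domain magnitude plot and much easier to separate in a time-frequency view.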

What's nice about this is that it allows me to design the waveform interactively. And, when you're done, you can export the waveforms to Simulink as a block or as a library of blocks, generate the data into the MATLAB workspace, or generate a MATLAB script that recreates whatever design you've done in the app.

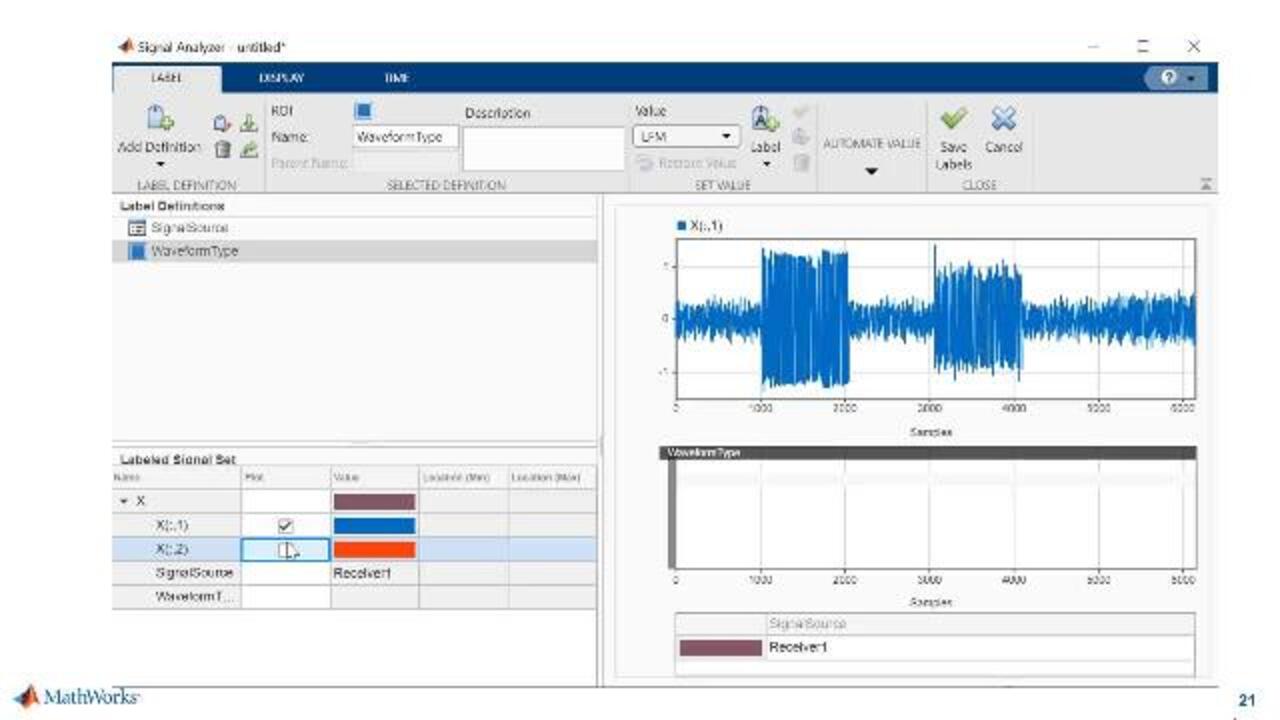

For this portion, we're going to export it to the workspace, and we'll use that data set in our Signal Analyzer app, which is part of Signal Processing Toolbox. Now this app is nice, because it allows us to visually explore the signals that we brought in. Now remember, in this case, the signal is actually one long signal, and it has three waveforms inside it.

There's going to be a waveform here, here, and here. Now, it's hard to tell the nature of these waveforms by looking at them in the time domain. Let me show you this in the app. So in the app, here, you can see that this is the time domain. When I bring up the spectrum, the signals don't stand out, but when I bring up the time-frequency view, it's very clear that there are the three signals that I mentioned.

This is actually the scalogram. You can see signal one. Signal two, in the middle, and then signal three over at the right. Now this is kind of a simple case just to illustrate the workflow, but imagine having a signal that you didn't know what was in it, and being able to explore, and figure out what's happening from a spectrogram standpoint. It's very valuable to be able to see what the content looks like.
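To illustrate why a time-frequency view separates signals that blur together in the time domain, here is a minimal Python/NumPy sketch. It uses a plain short-time Fourier transform rather than the scalogram shown in the app, and the test signal (three tone bursts at assumed normalized frequencies, plus weak noise) is invented for the example.

```python
import numpy as np

def stft_magnitude(x, win_len=64, hop=16):
    """Return |STFT| with shape (win_len, n_frames) using a Hann window."""
    window = np.hanning(win_len)
    n_frames = 1 + (len(x) - win_len) // hop
    frames = np.stack(
        [x[i * hop : i * hop + win_len] * window for i in range(n_frames)], axis=1
    )
    # FFT along the window axis; fftshift puts zero frequency in the middle row.
    spectrum = np.fft.fftshift(np.fft.fft(frames, axis=0), axes=0)
    return np.abs(spectrum)

# One long record: weak noise with three bursts at different frequencies.
rng = np.random.default_rng(0)
n = np.arange(3000)
x = 0.01 * (rng.standard_normal(3000) + 1j * rng.standard_normal(3000))
for start, freq in [(200, 0.10), (1200, -0.20), (2200, 0.30)]:
    burst = slice(start, start + 500)
    x[burst] += np.exp(2j * np.pi * freq * n[burst])

S = stft_magnitude(x)
freq_axis = np.fft.fftshift(np.fft.fftfreq(64))  # cycles per sample, one per row of S
```

Each column of `S` is the spectrum of one short window, so the three bursts show up as three separate ridges at different rows and different column ranges.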

Now that I know that there are three signals, and I can validate that in the spectrogram and the scalogram view, what I want to do now is label that data. And I'm going to show you a case of manually labeling the data. So I bring this data into the labeler. As you can see, this is the same data here with the three signals. And I know where the signals are now in time.

So as I use this app, what I'm able to do is, I'm able to go in, the first one I want to label is the LFM waveform, which I know is the first one in the signal. So I can just drag this over here, and manually select the start and stop, and as I move on here that's now labeled as LFM. You can see the waveform type there in the middle of the screen.

I'll find the Barker, and I can label the plotted signal. You can see the Barker waveform, and then finally, the rectangular waveform, over on the right. I can do the same thing here. Now, this may seem tedious to do if you have lots of data sets, and I'm going to show you what you can do when you have lots of data.

So now I have a labeled set. You could imagine having many more signals in here that I've gone through and labeled. And now I have this labeled signal set that I can use as the input into a network. Now, what happens when it's more complicated? What happens when I have more signals and it's a little bit harder to actually find where those signals are located?

So for this next example, what I'm going to do is, I've actually generated nine waveforms, and I've represented them by received signal one through nine, over on the left. And again, I'm going to bring those into the labeler and for this signal set that I've generated here, I actually have three LFM waveforms, three rectangular waveforms, and three Barker waveforms. And now, instead of going through them manually, what I want to do is use a feature that allows me to write a custom function to label.

So I'm in the labeler here, and the first thing I'm going to do is add a label definition. I'm going to call this ModID, and I'm going to define it as a categorical label with the values LFM, rectangular, and Barker. So these will be the labels that I want to apply to each of the signals, and I've got nine signals here. And those are all in the labeler right now.

I've written a function called classify, and when I apply it to all nine signals, I wait for the results to come back, and you can see here that nine labels were added to the nine signals. And as I scroll down now, I'll just pick one of each waveform type. I can plot them here. And you can see that's an LFM waveform. This is a rectangular waveform. And finally a Barker waveform, OK?

Now, I had nine waveforms. It actually went out and found the three LFMs, three rectangulars, and three Barkers, and labeled them directly in here. And when I'm done with this, now, just like on the other one I can save the labeled data set, and use that and come back, and enter it in as an input into one of the networks that I have. It's very powerful.

In this case, the classify function is actually taking the complex signal, doing a transform on it, and then passing it into a network that I've already trained. And it's using that trained network to label the signals, OK. And so, when I'm done, I end up with this set of labeled waveforms that I can box up and use outside the app in a deep learning system, OK?

So very powerful. We also have some great functions that help you find signals. So for example, if you're looking for specific instances of a signal, the function would go through in that custom function and find the locations for you. I showed you a case where we did categorical labeling, meaning we just gave a label as the waveform type, but you could also write a function to, for example, return the start and end points of each of the signals where they were located in a larger signal, similar to that first example I showed you.
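As a rough idea of what a custom function that returns start and end points might do, here is a minimal Python/NumPy sketch based on simple amplitude thresholding. It is not the function used in the webinar and not a MATLAB API; the function name, the threshold, and the test record are assumptions, and a real detector would usually smooth the envelope first.

```python
import numpy as np

def find_signal_regions(x, threshold):
    """Return (start, stop) sample pairs where |x| exceeds threshold; stop is exclusive."""
    active = np.abs(x) > threshold
    # Pad with False on both sides so regions touching the edges are still closed.
    padded = np.concatenate(([False], active, [False]))
    edges = np.diff(padded.astype(int))
    starts = np.flatnonzero(edges == 1)
    stops = np.flatnonzero(edges == -1)
    return list(zip(starts.tolist(), stops.tolist()))

# Toy record: weak noise with three unit-amplitude bursts at known positions.
rng = np.random.default_rng(1)
x = 0.01 * (rng.standard_normal(3000) + 1j * rng.standard_normal(3000))
for start in (200, 1200, 2200):
    x[start : start + 500] += 1.0

regions = find_signal_regions(x, threshold=0.5)
```

Each returned pair could then be attached to the record as a region-of-interest label, the automated counterpart of dragging the start and stop markers by hand.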

So, a lot of flexibility there in terms of writing custom functions, and that will enable you to go through larger data sets very efficiently.

Now I want to go through the examples that I mentioned. I've picked four examples that, I think, show a nice range of what you can do with the tools to synthesize data, both for comms and radar.

And I want to start off with two radar examples, and the first one is going to be focused on radar cross section classification. So let's go through that one to start with. The building block for this in the modeling is being able to model targets, that is, objects that will be in the field of view that a radar would see. And you have a range of different ways to do that.

But what I'll show you here is an angle- and frequency-dependent radar cross section. That is, you can define the cross section in a container that includes things like how it performs at certain frequencies, what it does at different azimuth and elevation angles, and how its RCS pattern changes. You can also put in things like Swerling models to get fluctuation.
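As a small illustration of the fluctuation models mentioned above, here is a Python/NumPy sketch that draws random RCS values under the standard Swerling statistics: exponentially distributed RCS for cases I/II, and a chi-square distribution with four degrees of freedom (a gamma distribution with shape 2) for cases III/IV. This is not the toolbox implementation; the mean RCS and sample count are assumed values.

```python
import numpy as np

rng = np.random.default_rng(0)
mean_rcs = 2.0          # mean radar cross section in square meters (assumed)
num_draws = 100_000

# Swerling I/II: many comparable scatterers, RCS is exponentially distributed.
rcs_swerling_1 = rng.exponential(scale=mean_rcs, size=num_draws)

# Swerling III/IV: one dominant scatterer plus smaller ones,
# chi-square with 4 degrees of freedom, i.e. gamma with shape 2 and the same mean.
rcs_swerling_3 = rng.gamma(shape=2.0, scale=mean_rcs / 2.0, size=num_draws)
```

Both sets have the same mean, but the Swerling III/IV draws have half the variance, so deep fades are less frequent. Multiplying a deterministic, angle-dependent RCS pattern by draws like these is one simple way to add fluctuation to synthesized returns.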

And the idea here is that you can take some basic building blocks, put them together, and generate shapes. So for our basic example, we're going to use that radar capability to model targets. And we're just going to start off with some basic shapes. So in this case, we'll have cylinders and cones as the basic shapes, and they will move through the field of view of the radar. This is just one sample here.

But each of the shapes will have a motion as it moves through the field of view of the radar. This is how the radar will see it as it's moving. It'll, of course, have its own RCS pattern that, again, varies in this case with aspect angle, but this could be either azimuth or elevation. And finally, we synthesize the return of the radar. That is, the radar sends a signal out, it reflects off of that object, and depending on what its motion is, how far away it is, and what it looks like to the radar, this RCS will change over time as the radar sees it.

We'll try to use that information to classify. So what we've done is, we've generated thousands of samples, thousands of returns, synthetically, where we generate the radar reflections and capture those RCS variations over time. I'm going to show you the links to each of these examples after the webinar is over.

We started off with a machine learning case in this example, but we've got a lot of requests to actually extend it to include both an SVM application, where we're doing something like machine learning, but also in the deep learning case. Now, note that from a deep learning aspect, this example is pretty simple and wouldn't require the deep learning piece, but it's more about the workflow. The same workflow would apply if you extended the number of shapes, and the amount of data.

But essentially what we're doing is in the example, we can take the wavelet scattering transform to extract features from this system. And the nice thing about this, is we end up with less data than we started with, first of all, because it's pulling out the features, and using those features to bring into the SVM. Now, again, from a workflow standpoint, we also use the wavelet transform, continuous wavelet transform, to generate time frequency maps of these shapes as they're moving over time.

We then feed those into a CNN, in this case SqueezeNet. And the other case that we show here is that we can take those RCS returns and put them into an LSTM network. And so, I just highlight this as an example that you can go through that shows the workflow of either extracting features or transforming radar data into time-frequency images.

I picked the CWT in this example, but this could have been a spectrogram or any of the many other time-frequency maps that you can generate with Signal Processing Toolbox or Wavelet Toolbox, and feed directly into a network, in this case SqueezeNet. Now, in the case of the LSTM, we were putting in raw data. We could also put in features as well, but the key thing here is that we can see how the network performs in these cases.

I just call this to your attention because it's more about the workflow, and you can use this across the board to see how you could simulate radar detections of moving targets and moving objects in the field of view, looking at those over time and seeing how they change. You train a network on them, and then give it some test data.

OK, so these other examples, I'll go into a little bit more detail. That was one I want to just highlight, because it does exercise a lot of the workflows that you'll hopefully use in your own system. The next one is more focused on radar micro-Doppler.

So, a lot of the techniques that were used in the previous example, for single point scatterers that were moving and had RCS patterns varying over time, still apply in this next example. But here, we're going to take multiple scatterers and use them to create a micro-Doppler signature. OK, so imagine the object is moving, but portions of the object are moving as well.

You can imagine a person walking with arms swinging, or a bicycle going, where the wheels are spinning and the pedals are moving. I'll show you that in a second. So, for the next example, we're going to use some building blocks to model pedestrians and bicycles. And so we have a pretty easy way to generate a pedestrian model, and this is the backscatter. You can see we put in the height, the walking speed, the initial position, and the heading.

And when we run this, and we move the pedestrian, and we look at the returns over time, you can see that we generate a micro-Doppler signature. Now, the same thing for the bicycle. This is actually a simple function, as well, that defines the spokes, the speed, the initial position, the heading, and the basic information about the gear ratio. Just like for the pedestrian, we're generating micro-Doppler.
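To show where a micro-Doppler signature comes from, here is a minimal Python/NumPy sketch with just two point scatterers: a body moving at constant speed and a limb that oscillates around it. This is far simpler than the pedestrian and bicycle models described above and is not how they are implemented; the carrier frequency, PRF, speeds, and swing amplitude are all assumed values.

```python
import numpy as np

c = 3e8                        # propagation speed in m/s
fc = 24e9                      # carrier frequency in Hz (assumed)
wavelength = c / fc
prf = 4000.0                   # slow-time sampling rate in Hz (assumed)
t = np.arange(8000) / prf      # 2 seconds of slow time

v_body = 1.4                                           # approach speed in m/s (assumed)
r_body = 20.0 - v_body * t                             # body range in meters
r_limb = r_body + 0.3 * np.sin(2 * np.pi * 1.0 * t)    # limb swings +/-0.3 m at 1 Hz

# Two-way propagation: echo phase is -4*pi*R/lambda for each scatterer.
body = np.exp(-1j * 4 * np.pi * r_body / wavelength)
limb = 0.5 * np.exp(-1j * 4 * np.pi * r_limb / wavelength)
echo = body + limb

freqs = np.fft.fftfreq(len(t), d=1 / prf)
peak_doppler_hz = freqs[np.argmax(np.abs(np.fft.fft(echo)))]

def energy_fraction_above(x, cutoff_hz):
    """Fraction of the signal's spectral energy at Doppler frequencies above cutoff_hz."""
    power = np.abs(np.fft.fft(x)) ** 2
    return power[freqs > cutoff_hz].sum() / power.sum()
```

The body contributes a single Doppler line at `2 * v_body / wavelength`, while the swinging limb spreads its energy over a band around that line. Taking a short-time Fourier transform of `echo` turns that spread into the periodic pattern seen in micro-Doppler plots.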

Now, these are wrapped up in Phased Array System Toolbox as building blocks that you can use directly, but all the fundamental building blocks are there to make your own complex target, for example, a helicopter or a quadcopter with rotating blades, things like this.

The pedestrian, as it turns out, is actually multiple cylinders representing each of the body parts. And in the case of the bicycle, it's actually a collection of single point scatterers. So these will be the building blocks that we use in our next example. So what we'll do is, we'll generate lots of data over here on the left to synthesize bicycle and pedestrian movement. We'll use that to generate data, and we'll take the short-time Fourier transform as our time frequency map to generate an image into the CNN.

And then, with our test data, we'll be able to put test data in and ask: is it a bicycle or a pedestrian? If you look at the synthesized data for one set of pedestrian, bicycle, and car, it's pretty clear what you're looking at. So you probably wouldn't even need a network to tell you which is which when it's this straightforward, but this gives you an idea of what the time-frequency map looks like for the micro-Doppler of a pedestrian, bicycle, and car to start with.

In the case where we have a pedestrian and a bicyclist, it starts to get a little bit fuzzier. You can look at the side here, and in this case, you might be able to see the bicyclist. You can also see the pedestrian there, and which one appears more dominant depends on things like which one is stronger and which one is closer to the radar.

So, here what you see is, it's clear when they're individually separated, but when you put them together it starts to get a little bit harder to differentiate. And the network will actually be able to figure this out if we train it properly. So we're going to build up a scenario where we have two sets of data.

And we're going to start off with one scene where we have just bicyclists and pedestrians. In the other case, we're going to have bicyclists, pedestrians, and a vehicle. So we'll generate noise from a car in the scene. And the scene combinations will include cases where we have one pedestrian, one bicyclist, one of each, and two of each, that is, two pedestrians and two bicyclists.

And what we'll do is we'll take the spectrogram for the systems, and we'll scale them to ensure that we have a common range across the objects. And we'll normalize them between zero and one. That'll be the input we put into the network for training and then also for testing. OK, and the key thing here is that one set of data will have no noise from having the vehicles in the scene, and the other one will have the vehicle as well.
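One plausible way to do the scaling and normalization described above is sketched below in Python/NumPy. The webinar does not spell out the exact procedure, so the choice of a dB scale, the 40 dB dynamic range, and the function name are assumptions for illustration only.

```python
import numpy as np

def normalize_spectrogram(magnitude, dynamic_range_db=40.0):
    """Map an STFT magnitude array to [0, 1] over a fixed dB range below its peak."""
    eps = np.finfo(float).tiny
    level_db = 20.0 * np.log10(np.maximum(magnitude, eps))
    level_db = level_db - level_db.max()                     # peak sits at 0 dB
    level_db = np.clip(level_db, -dynamic_range_db, 0.0)     # common range for all scenes
    return (level_db + dynamic_range_db) / dynamic_range_db

# Two stand-in "spectrograms" that differ only in overall signal strength.
rng = np.random.default_rng(0)
weak = rng.rayleigh(size=(64, 100))
strong = 100.0 * weak

img_weak = normalize_spectrogram(weak)
img_strong = normalize_spectrogram(strong)
```

Because the scale is referenced to each spectrogram's own peak, a near, strong target and a far, weak one produce images over the same value range, so the network sees the shape of the signature and not the absolute power.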

When we look at the results of this system, where we're classifying without car noise, we get pretty good results. We have the case of pedestrian, the bicycle, pedestrian plus bicycle, and then two of each. And the network does a pretty good job here, 95%, of figuring out what's happening. And we know the ground truth, so we can compare to the test accuracy of what the network provides and you can see here we've got a good answer.
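Comparing network predictions against known ground truth, as described above, usually comes down to a confusion matrix and an overall accuracy. Here is a minimal Python/NumPy sketch; the class names and the ten toy labels are made up for illustration and are not results from the webinar.

```python
import numpy as np

# Assumed class list: one pedestrian, one bicyclist, one of each, two of each kind.
classes = ["ped", "bic", "ped+bic", "ped+ped", "bic+bic"]

def confusion_matrix(truth, predicted, num_classes):
    """Rows are true classes, columns are predicted classes."""
    cm = np.zeros((num_classes, num_classes), dtype=int)
    np.add.at(cm, (truth, predicted), 1)
    return cm

# Toy labels (class indices) standing in for a real test set.
truth = np.array([0, 0, 1, 1, 2, 2, 3, 3, 4, 4])
predicted = np.array([0, 0, 1, 2, 2, 2, 3, 3, 4, 4])

cm = confusion_matrix(truth, predicted, len(classes))
test_accuracy = np.trace(cm) / cm.sum()   # 0.9 for these toy labels
```

The off-diagonal entries show which scenes get confused with which, which is often more useful than the single accuracy number when deciding what training data to add.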

If we take that same scenario, and we classify the signatures in the presence of car noise (remember, we didn't train with car noise to start with), then the test accuracy goes down. And that's probably expected, right? We didn't train on the kind of data we're seeing here. The car noise makes a big difference in terms of what we see. Now, we can go back in and retrain the CNN by adding car noise to the training set, and then we get something that's much higher compared to what we had when we didn't train with car noise.

So, the point here is that there's a lot of knobs you can turn to get a better answer, right? This could be generating more test data, making the test data more realistic, the training data more realistic. It could also involve tuning the network and optimizing that. It also could involve changing the way we actually create the features that go into the network, which time frequency map do we put in. That kind of thing.

It's interesting to see. This is another case where we have two bicyclists in the scene, and the network actually got this prediction correct. There are two bicycles, and when you look at the ground truth of each one, it's hard to tell visually here that this is actually two. But the network was able to see that there are two bicycles in the scene. I think if you just looked at these two, and compared them to this one, it would be hard for you to notice that yourself.

The other case, where the network predicts incorrectly, is with the car noise, where we have a bicycle. For the bicycle in the presence of car noise, it got the prediction wrong. It actually predicted pedestrian plus bicycle.

So here the car closely resembles a bicyclist pedaling, or a pedestrian walking slowly, and so the network returned an answer where the bicyclist is there, along with the pedestrian. When in fact, it was just the bicyclist.

So these are corner cases that you can really work on, and you have a lot of tools at your disposal. You can go back and change the network. You can go back and train with more data, or you can go back in and use a different transform, for example.

The next example is waveform modulation identification. This has gained a lot of traction in the news. You'll see this as part of DARPA challenges, and there are Army challenges. We're really looking to see what's the source of the interference, how do we understand what it's looking for. In some cases, it's also about shared spectrum and being able to manage the spectrum efficiently.

And this is kind of the classic case, right? The radar is operating here, and there's an interference source. The interference source may impact the ability to detect something. It may be intentional. It may be interference in the environment that's totally unintentional. And the question is, what is this interference source? That's the motivation for this kind of application.

We'll, again, go back to the building blocks. I showed you a little bit in the waveform analyzer where we generated those radar waveforms. We also have, in the communications suite of products, including Communications Toolbox, 5G Toolbox, and LTE Toolbox, the ability to generate waveforms, either standards-based waveforms or non-standard waveforms that you can generate directly.

And, again, these are building blocks that you can put together. It's important to be able to propagate those waveforms through a complex channel, right, where you can actually have the effects just as if the signal was going into a real environment. And this is important because part of the ability to use synthesized data in place of actual measured data is making sure it's high enough fidelity.

OK, so you can see a long list of different types of channels that you can put in here. Some are specific to standards, LTE and 5G. Others are more generic, things like rain, gas, and fog, or Longley-Rice, or some of the MIMO channels. These are all ones that can be used directly with your signals, both for radar and communications.
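As a rough illustration of what passing a waveform through impairments means, here is a minimal Python sketch. It is not one of the channel models listed above: it applies only two simple effects, a carrier frequency offset and additive white Gaussian noise. The function name `apply_impairments` and all of its parameters are assumptions made for this illustration.

```python
import cmath
import math
import random


def apply_impairments(samples, snr_db, freq_offset_hz, sample_rate_hz, rng):
    """Apply a carrier frequency offset, then add complex Gaussian noise.

    samples is a list of complex baseband samples; rng is a random.Random
    instance so that runs are repeatable. (Hypothetical helper.)
    """
    # Average signal power sets the noise level for the requested SNR.
    power = sum(abs(s) ** 2 for s in samples) / len(samples)
    noise_power = power / (10 ** (snr_db / 10))
    sigma = math.sqrt(noise_power / 2)  # split evenly between I and Q

    impaired = []
    for n, s in enumerate(samples):
        # A frequency offset is a phase rotation that grows with time.
        rotation = cmath.exp(2j * math.pi * freq_offset_hz * n / sample_rate_hz)
        noise = complex(rng.gauss(0, sigma), rng.gauss(0, sigma))
        impaired.append(s * rotation + noise)
    return impaired


# Draw random impairment values per frame to get variation in the data set.
rng = random.Random(0)
clean_frame = [complex(1, 0)] * 64
noisy_frame = apply_impairments(clean_frame, snr_db=10.0,
                                freq_offset_hz=1e3, sample_rate_hz=1e6, rng=rng)
```

Randomizing the SNR and offset for every generated frame is one simple way to get variation across the synthesized data.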

I showed you the radar waveform analyzer app. This is also something that I think is worth understanding here. It's a great app that allows you to generate waveform types, again, standards-based waveforms or non-standards-based waveforms. You can go through and add impairments. I showed you the channel models already that are available, a large set of channel models. But you can also go in and customize the types of impairments that the waveforms will see through the system.

And this is great because, again, I keep going back to that theme of being able to create higher fidelity. You can generate the waveforms, it's a waveform generator, and I can build up all the data that goes through here. Just like on the other apps, I can export these to a script or to a MATLAB workspace. Also in this case, I can connect up to hardware that I pick from a list of test equipment that we support, and directly generate waveforms from this app to feed test equipment. That's another great way to generate data for training your networks. We'll show you how this can be used coming up in another example.

So I've got two variants of this example I want to show you. In the first one, we look at radar and comms waveforms. We generate thousands of signals, with random variations and impairments. In this case, we're actually using a Wigner-Ville distribution transform. It's a time-frequency transform that does nicely for these types of signals. And that image is what's used to train the network. And we can go into the network, and we can see, from this confusion matrix, how the signals are identified.
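To make the time-frequency step concrete, here is a minimal Python sketch of a plain discrete Wigner-Ville distribution. It is an unsmoothed textbook form written for clarity, not speed, and it may differ from the transform used in the example (which may be smoothed). The function name `wigner_ville` and the `num_bins` parameter are assumptions for this illustration.

```python
import cmath
import math


def wigner_ville(x, num_bins):
    """Return a len(x)-by-num_bins real-valued Wigner-Ville map.

    x is a list of complex (analytic) samples. Because the kernel uses a
    lag of 2*m samples, bin k corresponds to a normalized frequency of
    k / (2 * num_bins) cycles per sample.
    """
    n_samples = len(x)
    tfr = []
    for n in range(n_samples):
        # Largest symmetric lag that stays inside the signal at time n.
        max_lag = min(n, n_samples - 1 - n)
        row = []
        for k in range(num_bins):
            acc = 0j
            for m in range(-max_lag, max_lag + 1):
                kernel = x[n + m] * x[n - m].conjugate()
                acc += kernel * cmath.exp(-2j * math.pi * k * m / num_bins)
            # The kernel is conjugate-symmetric in m, so the sum is real.
            row.append(acc.real)
        tfr.append(row)
    return tfr


# A complex tone at 0.125 cycles/sample should concentrate in bin
# 2 * 0.125 * 32 = 8 when 32 frequency bins are used.
tone = [cmath.exp(2j * math.pi * 0.125 * n) for n in range(32)]
tf_map = wigner_ville(tone, 32)
```

Each row of `tf_map` is one time instant, so the full list of rows can be treated as the image that goes into an image-based network.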

I can focus in on areas where the network got it wrong. And in this case, it's not surprising. There was some confusion on some of the AM waveforms. And, I can zoom in on those and actually look and see, well, what did the network think when it got it right, what did the network think when it got it wrong. This is powerful, because just like in all these examples that I'm talking about, we go back to the same thing.
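One way to find where a classifier goes wrong is to count the off-diagonal entries of the confusion matrix and sort them. The Python sketch below does this with made-up labels; the class names and the helper names `confusion_counts` and `most_confused` are illustrative assumptions and are not results from the example.

```python
from collections import Counter


def confusion_counts(true_labels, predicted_labels):
    """Count how often each (true, predicted) pair occurs."""
    return Counter(zip(true_labels, predicted_labels))


def most_confused(counts, top=3):
    """Return the most frequent misclassifications, largest count first."""
    errors = [(pair, c) for pair, c in counts.items() if pair[0] != pair[1]]
    errors.sort(key=lambda item: (-item[1], item[0]))
    return errors[:top]


# Illustrative labels only; not data from the webinar.
truth = ["BPSK", "BPSK", "DSB-AM", "DSB-AM", "DSB-AM", "SSB-AM", "SSB-AM"]
guess = ["BPSK", "BPSK", "SSB-AM", "SSB-AM", "DSB-AM", "DSB-AM", "SSB-AM"]
worst_pairs = most_confused(confusion_counts(truth, guess))
```

The pairs at the top of `worst_pairs` are the ones worth zooming in on first.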

It's very easy to iterate. Do I try more training data? Do I give it a different network configuration? Do I change the pre-processing and time-frequency analysis that I'm doing on the input to the network? These are all knobs you can turn as you go through the system.

We also have a modulation classification with deep learning example just for communications waveforms. And it's a similar workflow, but I think there are two main items I want to point out here. Instead of generating time-frequency image maps into the system, here, we're actually putting IQ data into the network. And we look at a couple of different architectures for just putting the raw IQ data in.

Sometimes the I and Q samples go in as separate pages, and sometimes they don't. And what's interesting is to see how some of the networks actually interpret and use the phase information as part of the network. The other piece that I think is important to point out here is that we validate it with data collected with a radio.
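The difference between the input layouts can be shown with a short Python sketch. It assumes that "pages" refers to stacking I and Q along a third dimension (1 x N x 2), as opposed to stacking them as two rows (2 x N). That interpretation, and the helper names, are assumptions for this illustration.

```python
def iq_as_rows(frame):
    """2 x N layout: row 0 holds in-phase samples, row 1 holds quadrature."""
    return [[s.real for s in frame], [s.imag for s in frame]]


def iq_as_pages(frame):
    """1 x N x 2 layout: I and Q sit along the last (page) axis."""
    return [[[s.real, s.imag] for s in frame]]


frame = [1 + 2j, 3 - 4j]
rows = iq_as_rows(frame)    # shape 2 x 2
pages = iq_as_pages(frame)  # shape 1 x 2 x 2
```

With the row layout a 2-D convolution can mix I and Q in its first layer, while the page layout presents them as separate channels. Which works better is something to test per network.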

So take a look at this. This is actually a configuration on my desk and just for the photo I put the radios next to each other. These are the ADI Pluto modules that I talked about earlier. The communications channel on my desk is in a stressful configuration. The radios are right next to each other, but it's about the workflow.

This little app is one that we put on top of the example to show what's happening. You can see here I've got the Pluto radio configured. I can pick the number of frames that I'm running. And when the network gets it correct, I'm actually transmitting the same waveform that I'm predicting. You get a little insight into the IQ values coming in on the receiver, but the thing that I like is also that you can actually see the probability, what the network's thinking.

Now in this example, we sent out a BPSK waveform, and the network is correctly identifying it. But you can see the other ones that it's considering here. So, it's a pretty powerful little framework to get started on, and even if you don't use these radios, or you have a much more stressful channel in between the radios, it's all about the same workflow that you'd be using.
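The per-class probabilities shown for a frame typically come from a softmax over the network's final scores. Here is a minimal Python sketch of that step; the class names and score values are made up, and `rank_classes` is a hypothetical helper.

```python
import math


def softmax(scores):
    """Convert raw class scores into probabilities that sum to one."""
    peak = max(scores)  # subtract the peak for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def rank_classes(class_names, scores):
    """Pair each class with its probability, most likely first."""
    probs = softmax(scores)
    return sorted(zip(class_names, probs), key=lambda p: p[1], reverse=True)


# Made-up scores for one received frame.
ranking = rank_classes(["BPSK", "QPSK", "8PSK"], [4.0, 1.0, 0.5])
for name, prob in ranking:
    print(f"{name}: {prob:.3f}")
```

Looking at the runner-up classes, and not only the winner, gives a feel for how confident the network is on each frame.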

OK, well this brings us to our last example, and this last example is focused on RF fingerprinting. What we're going to do is use RF fingerprinting to identify router impersonators on the network. And this is a wireless LAN. We're going to synthesize the RF fingerprint between each of these known routers, routers one through three, and an observer station. And if it doesn't fall into one of those three connections, we want to characterize an unknown router class, which would be basically anything else in this scenario.

We're going to use our channel impairments, our channel models, and WLAN Toolbox to generate lots of data to train our network for both known configurations, meaning routers one through three, as well as unknown configurations, where we have potentially rogue connectors onto the network. What you see here is that these are connected to a router and a mobile hot spot, and after some training the observer receives beacon frames and decodes the MAC address. Also, the observer extracts signals, and uses those signals to classify the RF fingerprint of the source of the beacon frame.

Now, in the case where the MAC address and the RF fingerprint match, as will be the case for routers one, two, and three, the observer declares that this was a known router. If the MAC address of the beacon is not in the database, and the RF fingerprint doesn't match, then it's labeled as an unknown router. Now, in the case of the impersonator, as this figure shows, what happens is that the evil twin router impersonator replicates the MAC address of a known router, and transmits beacon frames using that MAC address. Then the hacker can jam the original router and force the user to connect with the evil twin.

Now, the observer receives the beacon frames, and decodes the MAC address. Now, if we see it's the correct MAC address, but the RF fingerprint doesn't match, then we know we have somebody maliciously trying to connect up into that network. And for this example, we're using WLAN Toolbox to generate our waveforms, and we're passing those waveforms through complex channels that include channel impairments, as well as RF impairments. And that collection of data is used for training. That training data is used to train a neural network, and then we're able to develop all of our algorithms and test them out to see if we can detect router impersonation.

And in the example where we synthesize the data, everything works. We can refine our algorithms. We can refine the way the network is trained, the way the network architecture is configured, and we can continue to expand the amount of impairments, both for channel and RF, that we add in to make the system more realistic. Now, to test it, we can try simply changing the MAC address, in which case you see on the bottom here that the response is correct: it's not recognized. In the case where the MAC address is known but the RF fingerprint is wrong, we can tell that this is more than just an unknown address. We can see that somebody is trying to maliciously get onto the network.
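The decision rule described here can be sketched in a few lines of Python. The function name, the label strings, and the MAC-address database below are hypothetical. The handling of a beacon whose MAC address is not in the database but whose fingerprint resembles a known router is an assumption, since that case is not covered in the talk.

```python
def classify_beacon(mac_address, fingerprint_label, known_routers):
    """Combine the decoded MAC address with the fingerprint classifier output.

    known_routers maps MAC address -> router label (hypothetical database).
    fingerprint_label is the class predicted from the RF fingerprint, with
    "Unknown" standing for the unknown-router class.
    """
    expected_label = known_routers.get(mac_address)
    if expected_label is None:
        # MAC address not in the database. Treating every such beacon as an
        # unknown router, whatever the fingerprint says, is an assumption.
        return "unknown router"
    if fingerprint_label == expected_label:
        # Known MAC address and the fingerprint matches that same router.
        return "known router"
    # Known MAC address, but the signal does not look like that router.
    return "router impersonator"


# Hypothetical database of known routers.
database = {
    "AA:BB:CC:00:00:01": "Router1",
    "AA:BB:CC:00:00:02": "Router2",
    "AA:BB:CC:00:00:03": "Router3",
}
verdict = classify_beacon("AA:BB:CC:00:00:01", "Unknown", database)
```

Here `verdict` is "router impersonator", because the MAC address is in the database but the fingerprint does not match the router that owns it.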

So that's all the synthesis. And, again, all the workflow is exercised here, so that when I actually go and do the same thing with hardware, I have a much higher chance of having it work. So, it's a very similar kind of scenario here, but instead of doing synthesis, as in the example where we characterize the RF fingerprints between each of the routers and the observer, we use a Pluto radio to do that work. So we collect lots of data at this fixed location between each of the routers, and, here, we're using the hardware to bring in and capture the RF fingerprint. We use lots of different data sets to train the network, just like we did in synthesis, and we get the same result from a training standpoint with the actual hardware.

Now, how do we create the unknown case? Well, in this case, we have a mobile Pluto station, where we can put the radio on a platform that we can move around and collect data. And it turns out, this is what we used to classify our unknown RF fingerprint, because this is something that just could be anywhere in this scenario. And we want to capture lots of data as we move around and get different channels that we create between the mobile platform and the Pluto that's going to connect up to the routers. OK, these are all in the unknown class, because each channel will be different from the one we captured the known data in.

But as a summary, I think it's a good workflow because in the first portion we can do everything in synthesis. We can also, then, test it on hardware, and we get the same answer in both cases. So, it's a good sign, just like in the previous modulation ID example, where I can take data from a radio, and put it into the system. Here, I'm actually using data collected from the radio to train the network, as well as to test the network. So, you may have your own radio, you may have your own systems, and it could be even outside this application, but the concept of modeling a complex system where there are channels that signals move through, whether it's radar or communications, gives you a sense of how it could match what you actually see in your physical system.

OK, so now that we've gone through the examples, I want to remind you again of this great resource that we have, for deep learning tips and tricks. It really helps you choose your architecture, as well as, configure, and tune it for different applications, beyond just imaging. It includes signals as well. Here's the website up at the top of the page that I'm showing you. And it's also probably a good time now to summarize what we've talked about.

We discussed the fact that the models are only as good as the training data that we put in, and we showed how to label data directly that you build. We also talked about synthesizing data, and how these domain-specific tools can help you model radar and communication systems. One of the things that I didn't mention, but probably was obvious, is that when you synthesize data, in addition to being able to generate lots of data sets that are high fidelity, you can also avoid that labeling challenge. Because as you synthesize your own data, it's pretty easy to label it as you generate it, so that also makes the labeling process much, much easier.

We discussed the concept of collaborating in the AI ecosystem. All the examples that I showed you in this webinar were based on our Deep Learning Toolbox. But Deep Learning Toolbox interfaces with ONNX, which gives you an open connection into the ecosystem for artificial intelligence. And then finally, we talked about deployment and scaling to various platforms. Now, in this webinar, basically I've described some areas where we can take data from the hardware side, that's kind of on the left side of the diagram that I'm showing you here, but in the end, we can deploy these kinds of systems either to the cloud, or to embedded platforms, or GPUs.
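The point about labels coming for free with synthesis can be illustrated with a small Python sketch. It generates random symbol frames for two modulation types and attaches the label at the moment each frame is created. The constellation table, function name, and frame sizes are all assumptions for this illustration, and no channel or RF impairments are applied.

```python
import cmath
import math
import random

# Hypothetical constellation table: label -> ideal symbol points.
CONSTELLATIONS = {
    "BPSK": [1 + 0j, -1 + 0j],
    "QPSK": [cmath.exp(1j * (math.pi / 4 + k * math.pi / 2)) for k in range(4)],
}


def synthesize_labeled_frames(frames_per_class, frame_length, seed=0):
    """Generate (frame, label) pairs; the label is known at creation time."""
    rng = random.Random(seed)
    dataset = []
    for label, points in CONSTELLATIONS.items():
        for _ in range(frames_per_class):
            frame = [rng.choice(points) for _ in range(frame_length)]
            dataset.append((frame, label))
    rng.shuffle(dataset)  # mix the classes before training
    return dataset


dataset = synthesize_labeled_frames(frames_per_class=5, frame_length=16, seed=1)
```

Because every frame is created from a known recipe, no manual labeling pass is needed afterward.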

The examples that I showed you included Communications Toolbox and Phased Array System Toolbox, but you also saw that the last example included the wireless LAN protocol, which was part of WLAN Toolbox. All the signal processing, labeling, and feature extraction was done with our Wavelet Toolbox and Signal Processing Toolbox. The connection to the SVM was through Statistics and Machine Learning Toolbox, and all of our deep learning networks were built with Deep Learning Toolbox.

I didn't show these in as much detail, I kind of alluded to them, but 5G Toolbox and LTE Toolbox are also quite useful if you're looking at wireless communications, and you want to synthesize data, the waveforms that go with them, and all the physical layer modeling for all these types of systems. There are a lot of great resources to get started with. The examples that I showed you are available online. We go through the detailed code and how to synthesize the data that we used in the training.

We talk about the network architecture that we use in all the cases. And then we show the results for each one of these, and for all these, if you have the tools you can easily recreate all the demos that I showed you. I include the websites here. I also want to call your attention to a white paper that we have on deep learning that walks through a lot of what I talked about in more detail. That's free to download.

And then finally, our page for deep learning for signal processing. A lot of the signal processing concepts that I showed here, both for feature extraction, pre-processing, the wavelet processing, labeling, and analyzing signals in the signal analyzer app are all captured on this page.

So I encourage you to take a look at that. This brings me to the end of the webinar. Thank you very much, again, for your time.