Keyword Spotting in Noise Code Generation on Raspberry Pi

This example demonstrates code generation for keyword spotting on Raspberry Pi® using a Bidirectional Long Short-Term Memory (BiLSTM) network and mel frequency cepstral coefficient (MFCC) feature extraction. MATLAB® Coder™ with Deep Learning Support generates a standalone executable (.elf) file that runs on the Raspberry Pi. The MATLAB live script (.mlx) and the generated executable communicate over asynchronous User Datagram Protocol (UDP). The incoming speech signal is displayed in a timescope, and a blue rectangle (the mask) surrounds each spotted instance of the keyword, YES. For more details on MFCC feature extraction and deep learning network training, see Keyword Spotting in Noise Using MFCC and LSTM Networks.

Example Requirements

MATLAB Coder Interface for Deep Learning Support Package

ARM® processor that supports the NEON extension

ARM Compute Library version 20.02.1 (on the target ARM hardware)

Environment variables for the compilers and libraries

For supported versions of libraries and for information about setting up environment variables, see Prerequisites for Deep Learning with MATLAB Coder (MATLAB Coder).

Pretrained Network Keyword Spotting Using MATLAB and Streaming Audio from Microphone

The pretrained network operates at a sample rate of 16 kHz. Set the window length to 512 samples and the overlap length to 384 samples, and define the hop length as the difference between the window and overlap lengths. Then define the rate at which the mask is estimated: one mask is generated for every numHopsPerUpdate audio frames.

fs = 16e3;
windowLength = 512;
overlapLength = 384;
hopLength = windowLength - overlapLength;
numHopsPerUpdate = 16;
maskLength = hopLength * numHopsPerUpdate;
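The sample counts above translate directly into time durations. The following minimal sketch in base MATLAB only restates the parameters above; the duration variables are introduced here for illustration and are not used by the example.

```matlab
% Framing parameters from the example
fs = 16e3;                                   % sample rate (Hz)
windowLength = 512;                          % analysis window (samples)
overlapLength = 384;                         % overlap between windows (samples)
hopLength = windowLength - overlapLength;    % new samples per frame
numHopsPerUpdate = 16;                       % frames per mask estimate
maskLength = hopLength*numHopsPerUpdate;     % samples covered by one mask

% Illustrative durations (hypothetical helper variables)
windowDuration = windowLength/fs;            % seconds per analysis window
hopDuration = hopLength/fs;                  % seconds of new audio per frame
maskDuration = maskLength/fs;                % seconds of audio per mask

fprintf('hop = %d samples (%.1f ms), mask = %d samples (%.0f ms)\n', ...
    hopLength, 1e3*hopDuration, maskLength, 1e3*maskDuration);
```

Each frame therefore brings 8 ms of new audio, and each mask spans 128 ms of audio.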

Create an audioFeatureExtractor object to perform MFCC feature extraction.

afe = audioFeatureExtractor(SampleRate=fs, ...
    Window=hann(windowLength,'periodic'), ...
    OverlapLength=overlapLength, ...
    mfcc=true, ...
    mfccDelta=true, ...
    mfccDeltaDelta=true);
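The analysis window passed to audioFeatureExtractor is a periodic Hann window. As an illustration only, the sketch below builds the same window from its closed-form definition, 0.5*(1 - cos(2*pi*n/N)), which is what hann(N,'periodic') returns, so it runs without hann. It also checks that, at a hop of one quarter of the window length as used here, shifted copies of the window sum to a constant. This property is not required for MFCC extraction; it only shows that every input sample receives the same total window weight across the overlapping frames.

```matlab
windowLength = 512;
hopLength = 128;                          % windowLength - overlapLength
n = (0:windowLength-1)';

% Periodic Hann window from its closed-form definition
w = 0.5*(1 - cos(2*pi*n/windowLength));

% Overlap-add the window shifted by multiples of the hop length
numShifts = windowLength/hopLength;       % 4 frames overlap each sample
olaSum = zeros(hopLength,1);
for k = 0:numShifts-1
    olaSum = olaSum + w(k*hopLength + (1:hopLength));
end
% For 75% overlap the cosine terms cancel, so olaSum is constant (2)
```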

Download and load the pretrained network, as well as the mean (M) and the standard deviation (S) vectors used for feature standardization.

downloadFolder = matlab.internal.examples.downloadSupportFile("audio/examples","kwslstm.zip");
dataFolder = './';
netFolder = fullfile(dataFolder,"KeywordSpotting");
unzip(downloadFolder,netFolder)
load(fullfile(netFolder,'KWSNet.mat'),"KWSNet","M","S");
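M and S are applied as a per-feature z-score in the streaming loop. The sketch below shows the idea on synthetic data. The deterministic feature matrix, the feature count of 39, and computing M and S with mean and std are assumptions for illustration, since the example loads M and S precomputed from KWSNet.mat. Consistent with the expression (featureMatrix - M)./S used later, it assumes one frame per row and row vectors M and S with one entry per feature.

```matlab
numFrames = 1000;
numFeatures = 39;                          % assumed feature count
% Deterministic stand-in for a training feature matrix (frames x features)
X = (1:numFrames)'*(1:numFeatures)/numFrames + (1:numFeatures);

% Per-feature statistics: row vectors, one value per column
M = mean(X,1);
S = std(X,0,1);

% Standardize a block of 16 frames with the stored statistics
featureMatrix = X(1:16,:);
featureMatrix(~isfinite(featureMatrix)) = 0;   % same NaN/Inf guard as the loop
Z = (featureMatrix - M)./S;                    % implicit expansion per column

% On the data used to compute M and S: zero mean, unit standard deviation
Zall = (X - M)./S;
```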

Call generateMATLABFunction on the audioFeatureExtractor object to create the feature extraction function.

generateMATLABFunction(afe,'generateKeywordFeatures',IsStreaming=true);

Define an Audio Device Reader System object™ to read audio from your microphone. Set the frame length equal to the hop length so that a new set of features is computed for every new audio frame received from the microphone.

frameLength = hopLength;
adr = audioDeviceReader(SampleRate=fs, ...
    SamplesPerFrame=frameLength, ...
    OutputDataType='single');

Create a Time Scope to visualize the speech signals and estimated mask.

scope = timescope(SampleRate=fs, ...
    TimeSpanSource='property', ...
    TimeSpan=5, ...
    TimeSpanOverrunAction='Scroll', ...
    BufferLength=fs*5*2, ...
    ShowLegend=true, ...
    ChannelNames={'Speech','Keyword Mask'}, ...
    YLimits=[-1.2 1.2], ...
    Title='Keyword Spotting');

Initialize a buffer for the audio data, a buffer for the computed features, and a buffer to plot the input audio and the output speech mask.

dataBuff = dsp.AsyncBuffer(windowLength);
featureBuff = dsp.AsyncBuffer(numHopsPerUpdate);
plotBuff = dsp.AsyncBuffer(numHopsPerUpdate*windowLength);
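In the loop, read(dataBuff,windowLength,overlapLength) returns windowLength samples, of which overlapLength were already returned by the previous read, so each new hopLength-sample frame yields one full analysis window. The minimal sketch below reproduces that sliding-window behavior with a plain array. It is an illustration, not a replacement for dsp.AsyncBuffer, it starts from a buffer of zeros, and the ramp input signal is hypothetical.

```matlab
windowLength = 512;
overlapLength = 384;
hopLength = windowLength - overlapLength;

frameBuffer = zeros(windowLength,1,'single');   % starts as silence
numFrames = 10;
signal = single(1:numFrames*hopLength)';        % hypothetical input ramp

for k = 1:numFrames
    newFrame = signal((k-1)*hopLength + (1:hopLength));
    % Drop the oldest hopLength samples and append the newest frame
    frameBuffer = [frameBuffer(hopLength+1:end); newFrame];
end
% frameBuffer now holds the most recent windowLength input samples
```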

Perform keyword spotting on speech received from your microphone. To run the loop indefinitely, set timeLimit to Inf. To stop the simulation, close the scope.

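show(scope);
timeLimit = 20;
tic
while toc < timeLimit && isVisible(scope)
    % Read one hop of audio and store it for feature extraction and plotting
    data = adr();
    write(dataBuff,data);
    write(plotBuff,data);

    % Extract features from the latest window (overlapLength samples reused)
    frame = read(dataBuff,windowLength,overlapLength);
    features = generateKeywordFeatures(frame,fs);
    write(featureBuff,features.');

    % Once numHopsPerUpdate feature vectors are buffered, run the network
    if featureBuff.NumUnreadSamples == numHopsPerUpdate
        featureMatrix = read(featureBuff);
        featureMatrix(~isfinite(featureMatrix)) = 0;   % replace NaN/Inf
        featureMatrix = (featureMatrix - M)./S;        % standardize features
        [scores, state] = predict(KWSNet,featureMatrix);
        KWSNet.State = state;                          % carry state to next call

        % Per-frame class decision, then majority vote over the update
        [~,v] = max(scores,[],2);
        v = double(v) - 1;
        v = mode(v);
        predictedMask = repmat(v,numHopsPerUpdate*hopLength,1);

        % Plot the buffered audio together with the mask
        data = read(plotBuff);
        scope([data,predictedMask]);
        drawnow limitrate;
    end
end
hide(scope)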
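The decision step in the loop turns per-frame class scores into a single mask value: it picks the most likely class in each frame and then takes a majority vote over the numHopsPerUpdate frames. The sketch below reproduces that step on hypothetical scores. It assumes, as the expression double(v) - 1 suggests, that the network has two output classes and that the second one is the keyword.

```matlab
numHopsPerUpdate = 16;
hopLength = 128;

% Hypothetical scores: one row per frame, columns = [background, keyword]
scores = repmat(single([0.9 0.1]), numHopsPerUpdate, 1);
scores(6:15,:) = repmat(single([0.2 0.8]), 10, 1);   % keyword in 10 of 16 frames

[~,v] = max(scores,[],2);   % winning class index per frame (1 or 2)
v = double(v) - 1;          % 0 = background, 1 = keyword
v = mode(v);                % majority vote across the update
predictedMask = repmat(v, numHopsPerUpdate*hopLength, 1);
```

Because mode returns the smallest value when there is a tie, an 8-to-8 split resolves to background (0).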

The helperKeywordSpottingRaspi supporting function encapsulates the feature extraction and network prediction process demonstrated previously. To make feature extraction compatible with code generation, feature extraction is handled by the generated generateKeywordFeatures function. To make the network compatible with code generation, the supporting function uses the coder.loadDeepLearningNetwork (MATLAB Coder) function to load the network.

The supporting function uses a dsp.UDPReceiver System object to receive the captured audio from MATLAB and uses a dsp.UDPSender System object to send the input speech signal along with the estimated mask predicted by the network to MATLAB. Similarly, the MATLAB live script uses the dsp.UDPSender System object to send the captured speech signal to the executable running on Raspberry Pi and the dsp.UDPReceiver System object to receive the speech signal and estimated mask from Raspberry Pi.
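Judging from how the receiving loop later indexes the data (mask first, then speech), each packet from the Raspberry Pi carries maskLength mask samples followed by maskLength speech samples, all of type single. The sketch below packs and unpacks that layout with hypothetical data and counts the payload bytes. The actual transmission is handled by dsp.UDPSender and dsp.UDPReceiver; typecast is used here only to count bytes.

```matlab
maskLength = 2048;
speech = single(sin(2*pi*(0:maskLength-1)'/64));  % hypothetical speech frame
mask = ones(maskLength,1,'single');               % hypothetical mask

% Sender side: mask first, then speech
packet = [mask; speech];
payloadBytes = numel(typecast(packet,'uint8'));   % 4 bytes per single value

% Receiver side: split the packet back into its two halves
maskRx = packet(1:maskLength);
speechRx = packet(maskLength+1:2*maskLength);
```

At 16384 bytes, one packet fits comfortably within the 65507-byte UDP payload limit.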

Generate Executable on Raspberry Pi

Replace hostIPAddress with the IP address of your host machine. Your Raspberry Pi sends the input speech signal and estimated mask to this address.

hostIPAddress = coder.Constant('172.21.19.160');

Create a code generation configuration object to generate an executable program. Specify the target language as C++.

cfg = coder.config('exe');
cfg.TargetLang = 'C++';

Create a configuration object for deep learning code generation with the ARM Compute Library installed on your Raspberry Pi. Specify the architecture of the Raspberry Pi and attach the deep learning configuration object to the code generation configuration object.

dlcfg = coder.DeepLearningConfig('arm-compute');
dlcfg.ArmArchitecture = 'armv7';
dlcfg.ArmComputeVersion = '20.02.1';
cfg.DeepLearningConfig = dlcfg;

Use the Raspberry Pi Blockset function, raspi, to create a connection to your Raspberry Pi. In the following code, replace:

raspiname with the name of your Raspberry Pi

pi with your user name

password with your password

r = raspi('raspiname','pi','password');

Create a coder.hardware (MATLAB Coder) object for Raspberry Pi and attach it to the code generation configuration object.

hw = coder.hardware('Raspberry Pi');

cfg.Hardware = hw;

Specify the build folder on the Raspberry Pi.

buildDir = '~/remoteBuildDir';

cfg.Hardware.BuildDir = buildDir;

Generate the C++ main file required to produce the standalone executable.

cfg.GenerateExampleMain = 'GenerateCodeAndCompile';

Generate C++ code for helperKeywordSpottingRaspi on your Raspberry Pi.

codegen -config cfg helperKeywordSpottingRaspi -args {hostIPAddress} -report

Perform Keyword Spotting Using Deployed Code

Create a command that launches the helperKeywordSpottingRaspi executable on the Raspberry Pi, and use system to send the command to your Raspberry Pi.

applicationName = 'helperKeywordSpottingRaspi';
applicationDirPaths = raspi.utils.getRemoteBuildDirectory('applicationName',applicationName);
targetDirPath = applicationDirPaths{1}.directory;
exeName = strcat(applicationName,'.elf');
command = ['cd ',targetDirPath,'; ./',exeName,' &> 1 &'];
system(r,command);
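In bash, the suffix ' &> 1 &' in the command above starts the executable in the background and sends both its standard output and standard error to a file literally named 1 in the build folder. The sketch below shows an alternative way to build a launch command with sprintf that writes the output to a named log file using the POSIX form '> file 2>&1'. The folder path and log file name are hypothetical; in the example, the folder comes from raspi.utils.getRemoteBuildDirectory.

```matlab
applicationName = 'helperKeywordSpottingRaspi';
targetDirPath = '/home/pi/remoteBuildDir/MATLAB_ws/exampleFolder';  % hypothetical path
exeName = [applicationName '.elf'];
logName = [applicationName '.log'];                                  % hypothetical log file

% cd into the build folder, start the executable in the background,
% and capture both stdout and stderr in the log file
command = sprintf('cd %s; ./%s > %s 2>&1 &', targetDirPath, exeName, logName);
disp(command)
```

You would pass such a command to system(r,command) in the same way as in the example.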

Create a dsp.UDPSender System object to send the audio captured in MATLAB to your Raspberry Pi. Set targetIPAddress to the IP address of your Raspberry Pi. The executable on the Raspberry Pi receives the captured audio on the same port using a dsp.UDPReceiver System object.

targetIPAddress = '172.29.252.166';
UDPSend = dsp.UDPSender(RemoteIPPort=26000,RemoteIPAddress=targetIPAddress);

Create a dsp.UDPReceiver System object to receive the speech data and the predicted speech mask from your Raspberry Pi. Each UDP packet received from the Raspberry Pi consists of maskLength mask samples followed by maskLength speech samples. The maximum message length for the dsp.UDPReceiver object is 65507 bytes. Calculate the receive buffer size so that it holds as many complete UDP packets as fit within that limit.

sizeOfFloatInBytes = 4;
speechDataLength = maskLength;
numElementsPerUDPPacket = maskLength + speechDataLength;
maxUDPMessageLength = floor(65507/sizeOfFloatInBytes);
numPackets = floor(maxUDPMessageLength/numElementsPerUDPPacket);
bufferSize = numPackets*numElementsPerUDPPacket*sizeOfFloatInBytes;
UDPReceive = dsp.UDPReceiver(LocalIPPort=21000, ...
    MessageDataType="single", ...
    MaximumMessageLength=1+numElementsPerUDPPacket, ...
    ReceiveBufferSize=bufferSize);
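Worked through with the values used in this example (maskLength = 2048), the buffer-size calculation gives the numbers in the comments below. The arithmetic only restates the code above.

```matlab
maskLength = 2048;
sizeOfFloatInBytes = 4;
numElementsPerUDPPacket = 2*maskLength;                              % 4096 values
maxUDPMessageLength = floor(65507/sizeOfFloatInBytes);               % 16376 values
numPackets = floor(maxUDPMessageLength/numElementsPerUDPPacket);     % 3 packets
bufferSize = numPackets*numElementsPerUDPPacket*sizeOfFloatInBytes;  % 49152 bytes
fprintf('%d packets, receive buffer of %d bytes\n', numPackets, bufferSize);
```

Because 16376 is just under 4 × 4096, only three complete packets fit, so the receive buffer holds 49152 bytes.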

Spot the keyword as long as the time scope is open or until the time limit is reached. To stop live detection before the time limit is reached, close the time scope.

tic;
show(scope);
timelimit = 20;
while toc < timelimit && isVisible(scope)
    x = adr();
    UDPSend(x);
    data = UDPReceive();
    if ~isempty(data)
        mask = data(1:maskLength);
        dataForPlot = data(maskLength + 1 : numElementsPerUDPPacket);
        scope([dataForPlot,mask]);
    end
    drawnow limitrate;
end

Release the System objects and terminate the standalone executable.

hide(scope)
release(UDPSend)
release(UDPReceive)
release(scope)
release(adr)
stopExecutable(codertarget.raspi.raspberrypi,exeName)

Evaluate Execution Time Using Alternative PIL Function Workflow

To evaluate the execution time of the standalone executable on Raspberry Pi, use a processor-in-the-loop (PIL) workflow. To perform PIL profiling, generate a PIL function for the supporting function profileKeywordSpotting. The profileKeywordSpotting function is equivalent to helperKeywordSpottingRaspi, except that it returns the speech and predicted speech mask as outputs, whereas helperKeywordSpottingRaspi sends them over UDP. The UDP calls take less than 1 ms, which is small compared to the overall execution time, so omitting them does not significantly affect the measurement.

Create a code generation configuration object to generate the PIL function.

cfg = coder.config('lib','ecoder',true);
cfg.VerificationMode = 'PIL';

Specify the ARM Compute Library version and the target architecture.

dlcfg = coder.DeepLearningConfig('arm-compute');
cfg.DeepLearningConfig = dlcfg;
cfg.DeepLearningConfig.ArmArchitecture = 'armv7';
cfg.DeepLearningConfig.ArmComputeVersion = '20.02.1';

Set up the connection with your target hardware.

hw = coder.hardware('Raspberry Pi');

cfg.Hardware = hw;

Set the build directory and target language.

buildDir = '~/remoteBuildDir';
cfg.Hardware.BuildDir = buildDir;
cfg.TargetLang = 'C++';

Enable profiling and generate the PIL code. A MEX file named profileKeywordSpotting_pil is generated in your current folder.

cfg.CodeExecutionProfiling = true;
codegen -config cfg profileKeywordSpotting -args {pinknoise(hopLength,1,'single')} -report

Evaluate Raspberry Pi Execution Time

Call the generated PIL function multiple times to get the average execution time.

numPredictCalls = 10;
totalCalls = numHopsPerUpdate * numPredictCalls;
x = pinknoise(hopLength,1,'single');
for k = 1:totalCalls
    [maskReceived,inputSignal,plotFlag] = profileKeywordSpotting_pil(x);
end
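With totalCalls = numHopsPerUpdate * numPredictCalls, the loop feeds 160 frames. Assuming profileKeywordSpotting buffers features the same way as the streaming loop shown earlier, the network runs only when a full block of numHopsPerUpdate feature vectors is available. The sketch below lists the call indices where that happens under this assumption.

```matlab
numHopsPerUpdate = 16;
numPredictCalls = 10;
totalCalls = numHopsPerUpdate*numPredictCalls;

k = 1:totalCalls;
% Calls on which a full block of numHopsPerUpdate feature vectors is ready
predictCalls = k(mod(k,numHopsPerUpdate) == 0);
```

These are the frames expected to show execution-time spikes in the profile report.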

Terminate the PIL execution.

clear profileKeywordSpotting_pil

Generate an execution profile report to evaluate execution time.

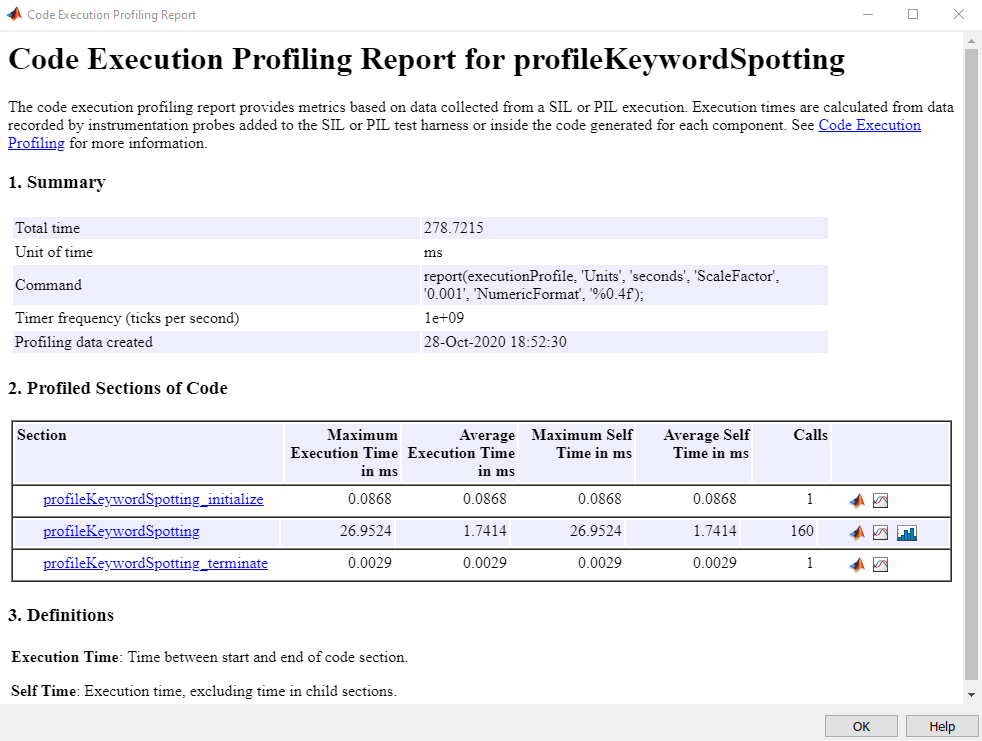

executionProfile = getCoderExecutionProfile('profileKeywordSpotting');
report(executionProfile, ...
    'Units','Seconds', ...
    'ScaleFactor','1e-03', ...
    'NumericFormat','%0.4f')

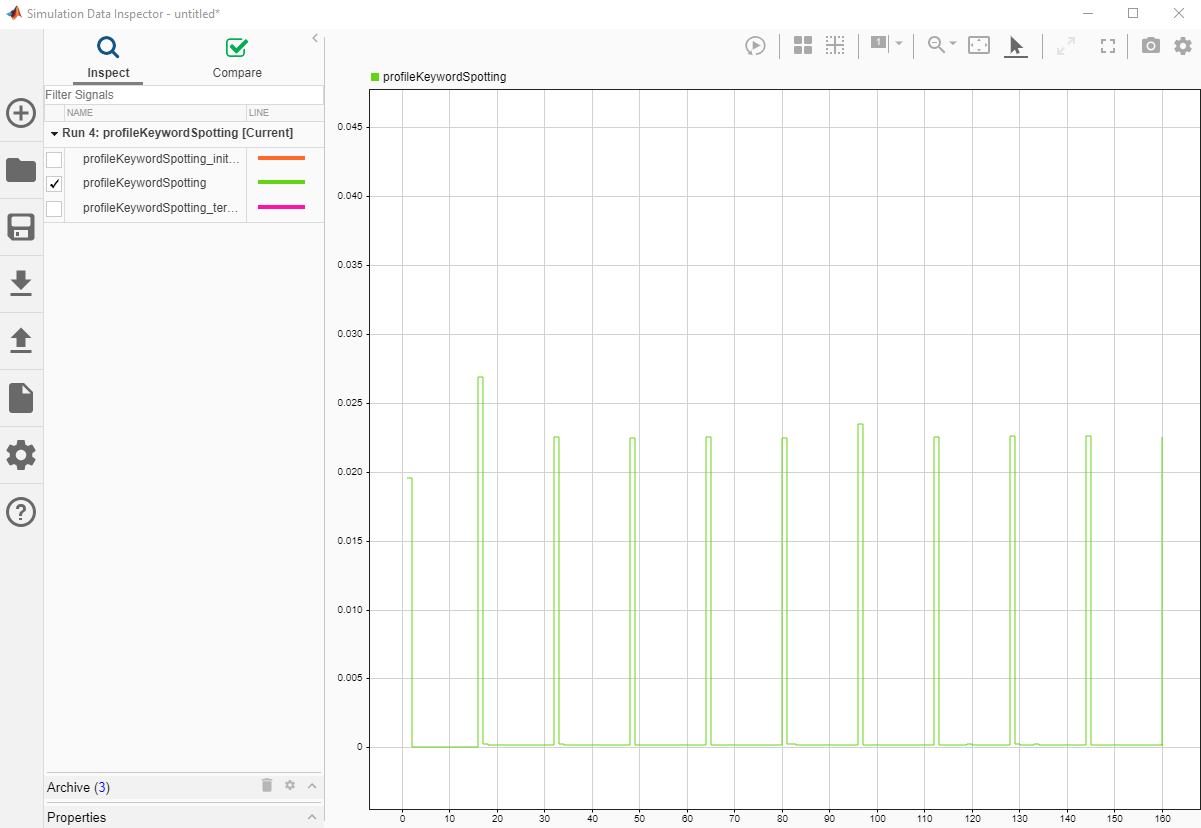

Plot the execution time of each frame from the generated report.

Processing the first frame took ~20 ms because of initialization overhead. The spikes in the time graph at every 16th frame (numHopsPerUpdate) correspond to the computationally intensive predict function, which is called once every 16 frames. The maximum execution time is ~30 ms, which is below the 128 ms real-time budget, that is, the duration of the maskLength = 2048 samples of audio covered by each mask at 16 kHz. Performance was measured on a Raspberry Pi 4 Model B Rev 1.1.
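The real-time condition can be expressed as a simple check. Assuming input buffering absorbs single-frame spikes, processing keeps pace with the audio if the total time spent on the numHopsPerUpdate frames of one update stays within the audio duration of one mask. The per-frame times below are hypothetical placeholders, not measurements from this example.

```matlab
fs = 16e3;
hopLength = 128;
numHopsPerUpdate = 16;
hopBudgetMs = 1e3*hopLength/fs;                  % audio duration of one frame (ms)
updateBudgetMs = numHopsPerUpdate*hopBudgetMs;   % audio duration of one mask (ms)

% Hypothetical execution times (ms): 15 light frames and 1 predict frame
frameTimesMs = [repmat(0.5,1,numHopsPerUpdate-1), 30];

totalMs = sum(frameTimesMs);
keepsUp = totalMs <= updateBudgetMs;   % true if processing keeps pace on average
```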