detectspeechnn

Syntax

Description

roi = detectspeechnn(audioIn,fs,Name=Value) specifies options using one or more name-value arguments. For example, detectspeechnn(audioIn,fs,MergeThreshold=0.5) merges speech regions that are separated by 0.5 seconds or less.
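The following is a minimal sketch of the name-value syntax, not an official example. It assumes the sample file Counting-16-44p1-mono-15secs.wav (distributed with Audio Toolbox) is on the MATLAB path; substitute any mono speech recording. It also assumes that roi holds one region per row, with the start and end sample indices in the two columns.

```matlab
% Read a speech recording. The file name is an assumption: it is a sample
% file distributed with Audio Toolbox. Replace it with your own recording.
[audioIn,fs] = audioread("Counting-16-44p1-mono-15secs.wav");

% Detect speech regions using the default options.
roiDefault = detectspeechnn(audioIn,fs);

% Detect again, merging regions separated by 0.5 seconds or less.
roiMerged = detectspeechnn(audioIn,fs,MergeThreshold=0.5);

% Report how many regions each call returned
% (assumes one region per row of roi).
fprintf("Default: %d regions, MergeThreshold=0.5: %d regions\n", ...
    size(roiDefault,1),size(roiMerged,1));

% Convert the merged region boundaries from sample indices to seconds.
roiSeconds = (roiMerged - 1)/fs;
disp(roiSeconds)
```

Comparing the two region counts is a quick way to see whether the chosen threshold changes the segmentation for a given recording.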

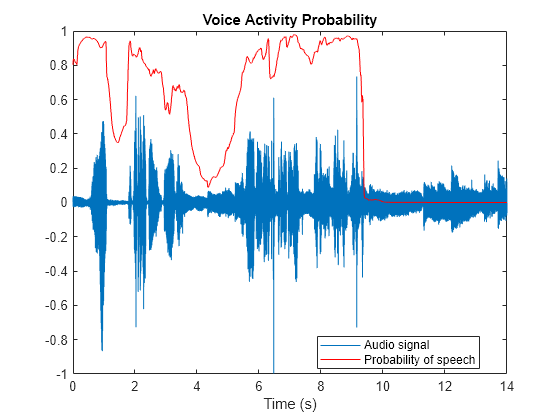

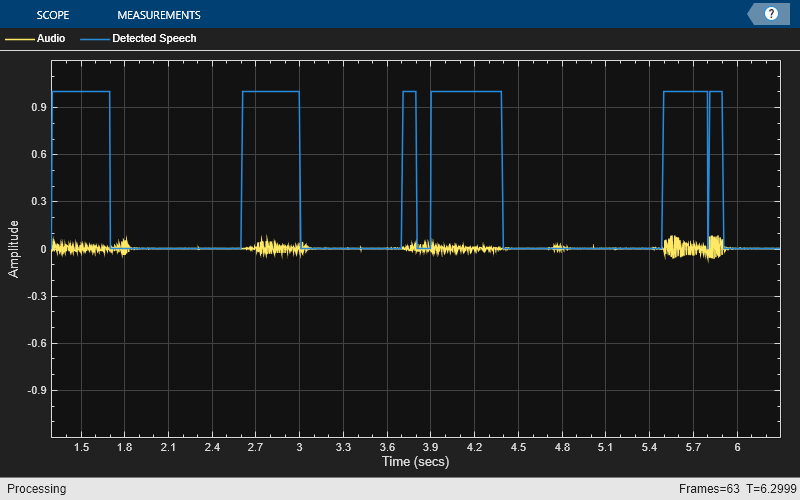

detectspeechnn(___) with no output arguments plots the input signal and the detected speech regions.
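As a brief sketch of the no-output syntax, the call below opens a figure and lets the function draw into it. The file name is again an assumption (an Audio Toolbox sample file); any speech recording can be used instead.

```matlab
% Read a speech recording. The file name is an assumption: it is a sample
% file distributed with Audio Toolbox. Replace it with your own recording.
[audioIn,fs] = audioread("Counting-16-44p1-mono-15secs.wav");

% Open a new figure, then call the function with no output arguments so
% that it plots the input signal and the detected speech regions.
figure
detectspeechnn(audioIn,fs)
```

Name-value arguments can be passed in the same call, for example detectspeechnn(audioIn,fs,MergeThreshold=0.5), to see how an option affects the plotted regions.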

This function requires both Audio Toolbox™ and Deep Learning Toolbox™.

Examples

Input Arguments

Name-Value Arguments

Output Arguments

Algorithms

References

[1] Ravanelli, Mirco, et al. "SpeechBrain: A General-Purpose Speech Toolkit." arXiv, June 8, 2021. http://arxiv.org/abs/2106.04624