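Analyze Text Data Using Multiword Phrases

This example shows how to analyze text using n-gram frequency counts.

An n-gram is a tuple of n consecutive words. For example, a bigram (the case when n = 2) is a pair of consecutive words such as "heavy rainfall". A unigram (the case when n = 1) is a single word. A bag-of-n-grams model records the number of times that different n-grams appear in a collection of documents.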
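To make the definition concrete, the following minimal sketch extracts and counts bigrams using only base MATLAB functions. The two token lists are hypothetical and are not taken from the example data, and the loop illustrates the idea only; it is not how bagOfNgrams is implemented.

```matlab
% Hypothetical, already tokenized documents (one string row vector each).
docs = {["heavy" "rainfall" "and" "thunderstorm" "winds"], ...
        ["heavy" "rainfall" "overnight"]};
n = 2;  % n-gram length: 2 gives bigrams

% Slide a window of n tokens over each document and collect the n-grams.
allNgrams = strings(0,1);
for d = 1:numel(docs)
    tokens = docs{d};
    for k = 1:numel(tokens)-n+1
        allNgrams(end+1,1) = join(tokens(k:k+n-1)," "); %#ok<SAGROW>
    end
end

% Count how often each distinct n-gram occurs across all documents.
[uniqueNgrams,~,idx] = unique(allNgrams);
counts = accumarray(idx,1);
[counts,order] = sort(counts,"descend");
tbl = table(uniqueNgrams(order),counts,VariableNames=["Ngram" "Count"])
```

In this sketch, "heavy rainfall" is the only bigram that occurs in both documents, so it appears first in the table.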

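Using a bag-of-n-grams model lets you retain more information about word order in the original text data than a model based on single words. For example, a bag-of-n-grams model is better suited for capturing short phrases that appear in the text, such as "heavy rainfall" and "thunderstorm winds".

To create a bag-of-n-grams model, use bagOfNgrams. You can input bagOfNgrams objects into other Text Analytics Toolbox functions such as wordcloud and fitlda.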

Load and Extract Text Data

Load the example data. The file factoryReports.csv contains factory reports, including a text description and categorical labels for each event. Remove the rows with empty reports.

filename = "factoryReports.csv";
data = readtable(filename,TextType="string");
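The code above reads the table but does not itself drop any rows. One possible way to remove rows with empty reports is sketched below on a small stand-in table. The table contents are hypothetical, and the sketch assumes that an empty report is either a missing string or a string of length zero.

```matlab
% Hypothetical stand-in for the table returned by readtable.
data = table( ...
    ["Mixer tripped the fuses."; ""; "Fried capacitors in the assembler."], ...
    categorical(["Electronic Failure"; "Leak"; "Electronic Failure"]), ...
    VariableNames=["Description" "Category"]);

% Flag rows whose description is missing or has zero length.
isEmptyReport = ismissing(data.Description) | strlength(data.Description) == 0;

% Delete the flagged rows.
data(isEmptyReport,:) = [];
numReports = height(data)
```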

Extract the text data from the table and view the first few reports.

textData = data.Description;
textData(1:5)

ans = 5×1 string

"Items are occasionally getting stuck in the scanner spools."

"Loud rattling and banging sounds are coming from assembler pistons."

"There are cuts to the power when starting the plant."

"Fried capacitors in the assembler."

"Mixer tripped the fuses."

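Prepare Text Data for Analysis

Create a function that tokenizes and preprocesses the text data so that it can be used for analysis. The function preprocessText, listed at the end of the example, performs these steps:

1. Convert the text data to lowercase using lower.
2. Tokenize the text using tokenizedDocument.
3. Erase punctuation using erasePunctuation.
4. Remove a list of stop words (such as "and", "of", and "the") using removeStopWords.
5. Remove words with 2 or fewer characters using removeShortWords.
6. Remove words with 15 or more characters using removeLongWords.
7. Lemmatize the words using normalizeWords.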
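The two length thresholds in the steps above are both inclusive: words of length 2 or less and words of length 15 or more are removed. The following sketch mimics that filtering rule with base MATLAB functions on a hypothetical token list. It illustrates the rule only and does not call removeShortWords or removeLongWords.

```matlab
% Hypothetical tokens with lengths 2, 3, 4, 17, 2, and 5.
tokens = ["an" "oil" "leak" "electromechanical" "in" "mixer"];

shortLimit = 2;   % remove words with this many characters or fewer
longLimit = 15;   % remove words with this many characters or more

% Keep only the words whose length lies strictly between the two limits.
len = strlength(tokens);
keep = len > shortLimit & len < longLimit;
filtered = tokens(keep)
```

Here only "oil", "leak", and "mixer" survive the filter.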

Use the example preprocessing function preprocessText to prepare the text data.

documents = preprocessText(textData);
documents(1:5)

ans =

5×1 tokenizedDocument:

6 tokens: item occasionally get stuck scanner spool

7 tokens: loud rattling bang sound come assembler piston

4 tokens: cut power start plant

3 tokens: fry capacitor assembler

3 tokens: mixer trip fuse

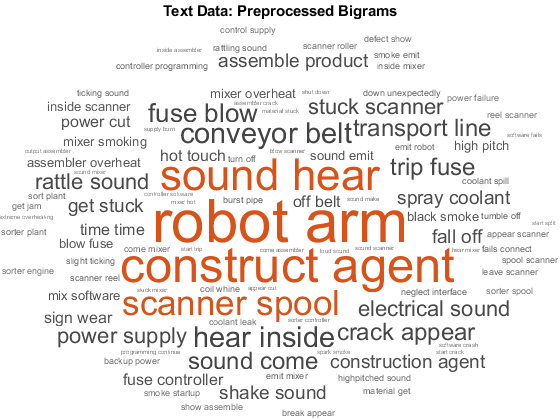

Create Word Cloud of Bigrams

Create a word cloud of bigrams by first creating a bag-of-n-grams model using bagOfNgrams, and then inputting the model to wordcloud.

To count the n-grams of length 2 (bigrams), use bagOfNgrams with the default options.

bag = bagOfNgrams(documents)

bag =

bagOfNgrams with properties:

Counts: [480×921 double]

Vocabulary: ["item" "occasionally" "get" "stuck" "scanner" "loud" "rattling" "bang" "sound" "come" "assembler" "cut" "power" "start" "fry" "capacitor" "mixer" "trip" "burst" "pipe" … ]

Ngrams: [921×2 string]

NgramLengths: 2

NumNgrams: 921

NumDocuments: 480
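The Counts and Ngrams properties shown above can be combined to look up how often one particular bigram occurs. The following is a minimal sketch on two hypothetical preprocessed reports, not on the factory data. It assumes that each row of Ngrams corresponds to the column of Counts with the same index.

```matlab
% Hypothetical, already preprocessed reports.
str = ["fry capacitor assembler"; "fry capacitor mixer"];
documents = tokenizedDocument(str);

% Count bigrams (the default n-gram length).
bag = bagOfNgrams(documents);

% Locate the bigram "fry capacitor" among the rows of Ngrams.
idx = bag.Ngrams(:,1) == "fry" & bag.Ngrams(:,2) == "capacitor";

% Read the per-document counts for that bigram and total them.
perDocument = full(bag.Counts(:,idx));
total = sum(perDocument)
```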

Visualize the bag-of-n-grams model using a word cloud.

figure

wordcloud(bag);

title("Text Data: Preprocessed Bigrams")

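Fit Topic Model to Bag-of-N-Grams

A latent Dirichlet allocation (LDA) model is a topic model that discovers underlying topics in a collection of documents and infers the word probabilities in topics.

Create an LDA topic model with 10 topics using fitlda. The fitlda function fits the LDA model by treating each n-gram as a single word.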

mdl = fitlda(bag,10,Verbose=0);
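One way to picture "treating n-grams as single words" is to join the words of each n-gram into one token, so that each bigram becomes a single vocabulary entry. The sketch below does this for two hypothetical bigrams with the base MATLAB join function. It is an illustration of the idea only, not a description of how fitlda represents n-grams internally.

```matlab
% Hypothetical bigrams, one per row, in the same layout as the Ngrams property.
ngrams = ["fry" "capacitor"; "mixer" "trip"];

% Join the words in each row into a single token.
singleTokens = join(ngrams," ",2)
```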

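Visualize the first four topics as word clouds.

figure
tiledlayout("flow");
for i = 1:4
    nexttile
    wordcloud(mdl,i);
    title("LDA Topic " + i)
end

The word clouds highlight bigrams that commonly co-occur within the LDA topics. For each specified LDA topic, the wordcloud function plots the bigrams with sizes according to their probabilities.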

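Analyze Text Using Longer Phrases

To analyze text using longer phrases, specify the NGramLengths option of bagOfNgrams to be a larger value.

When working with longer phrases, it can be useful to keep stop words in the model. For example, to detect the phrase "is not happy", keep the stop words "is" and "not" in the model.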
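The effect of stop word removal on a phrase such as "is not happy" can be sketched with base MATLAB functions. The sentence and the three-word stop list below are hypothetical. The list used by removeStopWords is much longer.

```matlab
% Hypothetical tokenized sentence and a tiny, hypothetical stop word list.
tokens = ["the" "customer" "is" "not" "happy"];
stopList = ["the" "is" "not"];

% Drop every token that appears in the stop list.
kept = tokens(~ismember(tokens,stopList));

% Number of trigrams available with and without stop words.
n = 3;
numTrigramsWithStopWords = max(numel(tokens)-n+1,0)
numTrigramsWithoutStopWords = max(numel(kept)-n+1,0)

% Check whether the trigram "is not happy" occurs in the original tokens.
hasPhrase = any(tokens(1:end-2) == "is" & ...
                tokens(2:end-1) == "not" & ...
                tokens(3:end) == "happy")
```

After stop word removal, only "customer" and "happy" remain, so no trigram can be formed and the phrase cannot be detected.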

Preprocess the text. Erase the punctuation using erasePunctuation, and then tokenize using tokenizedDocument. This time, do not remove stop words.

cleanTextData = erasePunctuation(textData);
documents = tokenizedDocument(cleanTextData);

To count the n-grams of length 3 (trigrams), use bagOfNgrams and specify NGramLengths to be 3.

bag = bagOfNgrams(documents,NGramLengths=3);
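The n-gram length option also accepts a vector, so one model can hold several lengths at once. The following minimal sketch counts bigrams and trigrams together on two hypothetical sentences, and then uses the corresponding option of topkngrams to list each length separately. The option spelling follows the code in this example.

```matlab
% Hypothetical sentences, not taken from the factory reports.
str = ["the robot arm is stuck"; "the robot arm is spraying coolant"];
documents = tokenizedDocument(str);

% Count bigrams and trigrams in the same model.
bag = bagOfNgrams(documents,NGramLengths=[2 3]);

% List the most frequent n-grams of each length separately.
tblBigrams = topkngrams(bag,3,NGramLengths=2)
tblTrigrams = topkngrams(bag,3,NGramLengths=3)
```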

Visualize the bag-of-n-grams model using a word cloud. The word cloud of trigrams better shows the context of the individual words.

figure

wordcloud(bag);

title("Text Data: Trigrams")

View the top 10 trigrams and their frequency counts using topkngrams.

tbl = topkngrams(bag,10)

tbl=10×3 table

                  Ngram                   Count    NgramLength
    __________________________________    _____    ___________

    "in"       "the"         "mixer"       14          3
    "in"       "the"         "scanner"     13          3
    "blown"    "in"          "the"          9          3
    "the"      "robot"       "arm"          7          3
    "stuck"    "in"          "the"          6          3
    "is"       "spraying"    "coolant"      6          3
    "from"     "time"        "to"           6          3
    "time"     "to"          "time"         6          3
    "heard"    "in"          "the"          6          3
    "on"       "the"         "floor"        6          3

Example Preprocessing Function

The function preprocessText performs these steps in order:

1. Convert the text data to lowercase using lower.
2. Tokenize the text using tokenizedDocument.
3. Erase punctuation using erasePunctuation.
4. Remove a list of stop words (such as "and", "of", and "the") using removeStopWords.
5. Remove words with 2 or fewer characters using removeShortWords.
6. Remove words with 15 or more characters using removeLongWords.
7. Lemmatize the words using normalizeWords.

function documents = preprocessText(textData)

% Convert the text data to lowercase.
cleanTextData = lower(textData);

% Tokenize the text.
documents = tokenizedDocument(cleanTextData);

% Erase punctuation.
documents = erasePunctuation(documents);

% Remove a list of stop words.
documents = removeStopWords(documents);

% Remove words with 2 or fewer characters, and words with 15 or greater
% characters.
documents = removeShortWords(documents,2);
documents = removeLongWords(documents,15);

% Lemmatize the words.
documents = addPartOfSpeechDetails(documents);
documents = normalizeWords(documents,Style="lemma");

end

See Also

tokenizedDocument | bagOfWords | removeStopWords | erasePunctuation | removeLongWords | removeShortWords | bagOfNgrams | normalizeWords | topkngrams | fitlda | ldaModel | wordcloud | addPartOfSpeechDetails