MDP robot grid-world example

Applies value iteration to learn a policy for a Markov Decision Process (MDP): a robot in a grid world.
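Value iteration repeatedly applies a Bellman backup to every cell until the values stop changing, then reads the policy off the converged values. One common form is shown below. It uses a state reward $R(s)$, a discount factor $\gamma$, and a transition model $p(s' \mid s, a)$. This notation is illustrative and is not taken from the original code.

$$
V_{k+1}(s) = R(s) + \gamma \max_{a \in \mathcal{A}} \sum_{s'} p(s' \mid s, a)\, V_k(s')
$$

$$
\pi(s) = \arg\max_{a \in \mathcal{A}} \sum_{s'} p(s' \mid s, a)\, V(s')
$$

Here $\mathcal{A}$ is the set of 9 actions (8 moves plus staying in place). For the deterministic robot, $p(s' \mid s, a)$ puts all its mass on the commanded neighbor, so each sum reduces to a single term.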

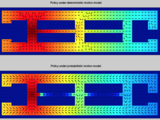

The world consists of free spaces (0) and obstacles (1). On each turn, the robot can move in one of 8 directions or stay in place. A reward function gives one free space, the goal location, a high reward. All other free spaces have a small penalty, and obstacles have a large negative reward. Value iteration is used to learn an optimal 'policy', a function that assigns a control input to every possible location.
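The grid world and value-iteration loop described above can be sketched as a small, self-contained Python program. This is not the author's MATLAB code. The following are all assumptions for illustration:

- the reward values (`GOAL_REWARD`, `FREE_REWARD`, `OBSTACLE_REWARD`) and the discount `GAMMA`;
- the rule that a move off the grid leaves the robot in place;
- the function names `value_iteration` and `greedy_policy`.

The robot here is deterministic, so each backup simply takes the best successor value.

```python
# Minimal deterministic value-iteration sketch for a grid world.
# Hypothetical parameters; not the original MATLAB implementation.
# Cells: 0 = free space, 1 = obstacle.

GAMMA = 0.95              # discount factor (assumed)
GOAL_REWARD = 100.0       # high reward at the goal cell (assumed)
FREE_REWARD = -1.0        # small penalty on other free cells (assumed)
OBSTACLE_REWARD = -100.0  # large negative reward on obstacles (assumed)

# (row, col) offsets: stay in place, then the 8 neighboring directions.
ACTIONS = [(0, 0), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1), (-1, -1)]


def reward(world, goal, cell):
    """State reward R(s) for a cell."""
    r, c = cell
    if cell == goal:
        return GOAL_REWARD
    return OBSTACLE_REWARD if world[r][c] == 1 else FREE_REWARD


def step(world, state, action):
    """Deterministic move; a move off the grid leaves the robot in place (assumption)."""
    r, c = state[0] + action[0], state[1] + action[1]
    if 0 <= r < len(world) and 0 <= c < len(world[0]):
        return (r, c)
    return state


def value_iteration(world, goal, tol=1e-6, max_iters=10_000):
    """Apply V(s) <- R(s) + GAMMA * max_a V(step(s, a)) until the largest change is below tol."""
    rows, cols = len(world), len(world[0])
    V = [[0.0] * cols for _ in range(rows)]
    for _ in range(max_iters):
        new_V = [[0.0] * cols for _ in range(rows)]
        delta = 0.0
        for r in range(rows):
            for c in range(cols):
                best = max(V[nr][nc] for nr, nc in (step(world, (r, c), a) for a in ACTIONS))
                new_V[r][c] = reward(world, goal, (r, c)) + GAMMA * best
                delta = max(delta, abs(new_V[r][c] - V[r][c]))
        V = new_V
        if delta < tol:
            break
    return V


def greedy_policy(world, V):
    """For every cell, pick the action whose successor has the highest value (first wins on ties)."""
    policy = []
    for r in range(len(world)):
        row = []
        for c in range(len(world[0])):
            def successor_value(a, r=r, c=c):
                nr, nc = step(world, (r, c), a)
                return V[nr][nc]
            row.append(max(ACTIONS, key=successor_value))
        policy.append(row)
    return policy
```

Consider a 3×3 world with a single obstacle in the middle and the goal in the top-right corner, `(0, 2)`. For this world, `greedy_policy(world, value_iteration(world, (0, 2)))` assigns `(0, 0)` (stay) at the goal, and elsewhere it assigns a move toward a higher-valued neighbor.

The goal is not treated as terminal in this sketch, so its value converges to `GOAL_REWARD / (1 - GAMMA)`. Making the goal absorbing would be an equally reasonable design choice.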

Video: https://youtu.be/gThGerajccM

This function compares a deterministic robot, which always executes movements perfectly, with a stochastic robot, which has a small probability of moving ±45 degrees off the commanded direction. The optimal policy for the stochastic robot avoids narrow passages and tries to move to the center of corridors.
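A minimal sketch of the stochastic motion model described above, in Python and again hypothetical. The following are assumptions, not details of the original implementation:

- the slip probability `P_SLIP = 0.1` per side;
- the rule that "stay" never slips;
- the names `outcome_distribution` and `expected_value`.

```python
# Hypothetical slip model for the stochastic robot (not the original MATLAB code).
# A commanded move succeeds with probability 1 - 2 * P_SLIP; otherwise it is
# rotated 45 degrees to one side or the other, each with probability P_SLIP.

P_SLIP = 0.1  # assumed probability of each 45-degree error

# The 8 moves as (row, col) offsets, ordered around the compass so that
# adjacent entries (including the last and the first) are 45 degrees apart.
COMPASS = [(-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1), (-1, -1)]
STAY = (0, 0)


def outcome_distribution(action):
    """Return (probability, actual_move) pairs for a commanded move."""
    if action == STAY:
        return [(1.0, STAY)]  # assumption: staying in place never slips
    i = COMPASS.index(action)
    return [
        (1.0 - 2 * P_SLIP, action),
        (P_SLIP, COMPASS[(i + 1) % 8]),  # rotated 45 degrees one way
        (P_SLIP, COMPASS[(i - 1) % 8]),  # rotated 45 degrees the other way
    ]


def expected_value(V, world, state, action):
    """Expected successor value under the slip model; off-grid moves leave the robot in place."""
    rows, cols = len(world), len(world[0])
    total = 0.0
    for p, (dr, dc) in outcome_distribution(action):
        r, c = state[0] + dr, state[1] + dc
        if not (0 <= r < rows and 0 <= c < cols):
            r, c = state
        total += p * V[r][c]
    return total
```

To obtain the stochastic policy, replace the deterministic best-successor value in the value-iteration backup with `max(expected_value(V, world, s, a) for a in actions)`. A move that runs alongside an obstacle has some chance of slipping into it, which lowers that move's expected value. This is the mechanism behind the stochastic policy's preference for corridor centers.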

From Chapter 14 of 'Probabilistic Robotics' (ISBN-13: 978-0262201629), http://www.probabilistic-robotics.org

Aaron Becker, March 11, 2015

Cite As

Aaron T. Becker's Robot Swarm Lab (2024). MDP robot grid-world example (https://www.mathworks.com/matlabcentral/fileexchange/49992-mdp-robot-grid-world-example), MATLAB Central File Exchange.


Platform Compatibility

Windows, macOS, Linux

Categories

- Robotics and Autonomous Systems > Robotics System Toolbox >

- Engineering > Electrical and Computer Engineering > Robotics >


Acknowledgements

Inspired: Markov Decision Process (MDP) Algorithm, Kilobot Swarm Control using Matlab + Arduino


| Version | Release Notes |
|---|---|
| 1.0.0.0 | added link to video https://youtu.be/gThGerajccM |